| layout | fancy_home |
|---|---|
| permalink | /publications-visual/ |
| title | Research Atlas |

Curated Research Map

Three streams drive my current research. AIGC systems push structured creativity, vision-language agents reason over complex scenes, and low-level restoration builds the reliable backbone beneath both. The gallery below highlights representative works in each space.

ICLR / CVPR / ICCV / AAAI

AIGC · VLM · Low-Level

Task-driven design

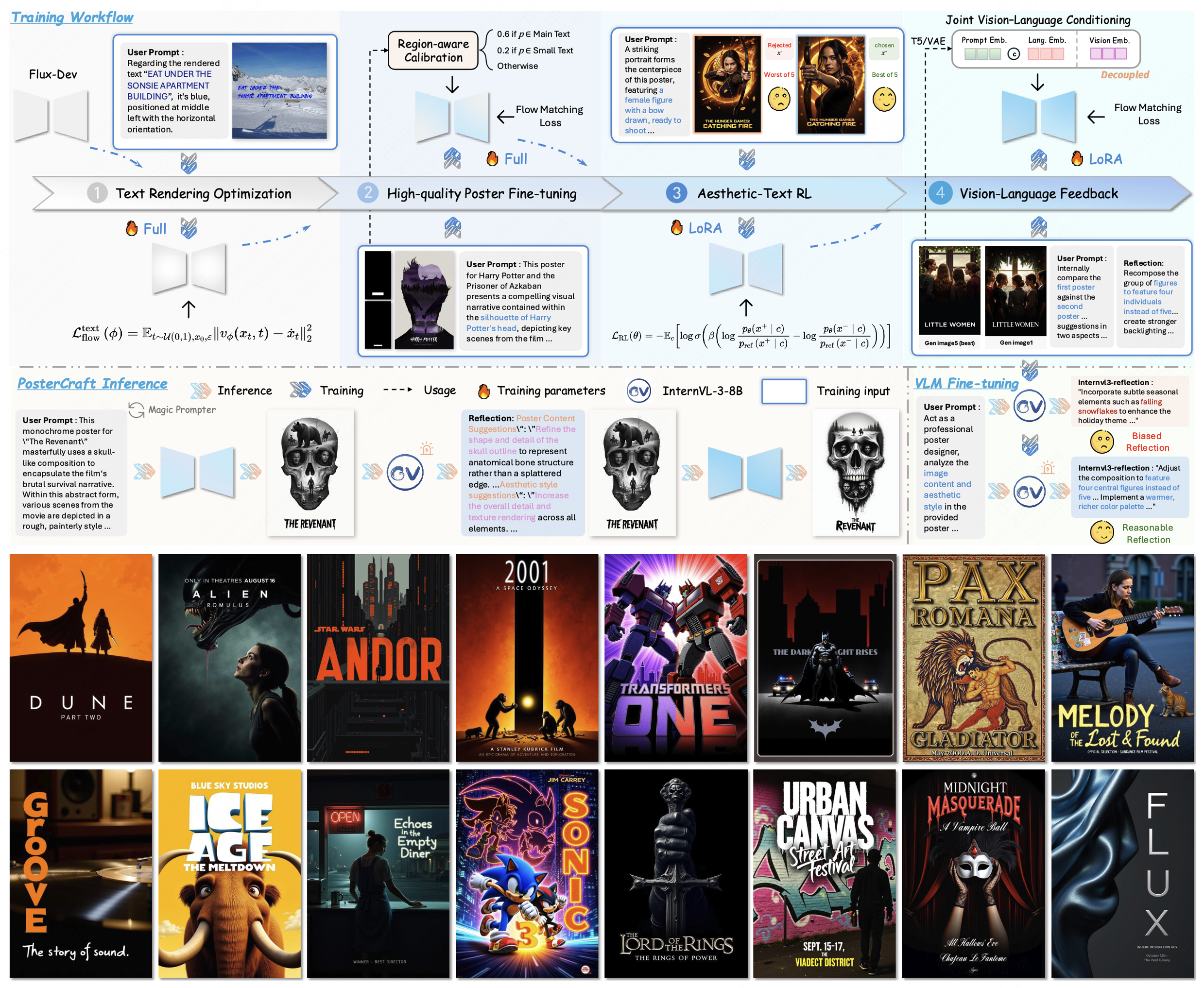

AIGC Systems

Unified workflows for controllable poster generation, combining task distillation, reward modeling, and data curation.

A generalist system that distills local editing, global layout, and reward feedback into a single controllable workflow with public demos and datasets.

PosterCraft, accepted to ICLR'26, couples layout planning with stylized diffusion to turn prompts directly into production-ready posters with consistent typography.

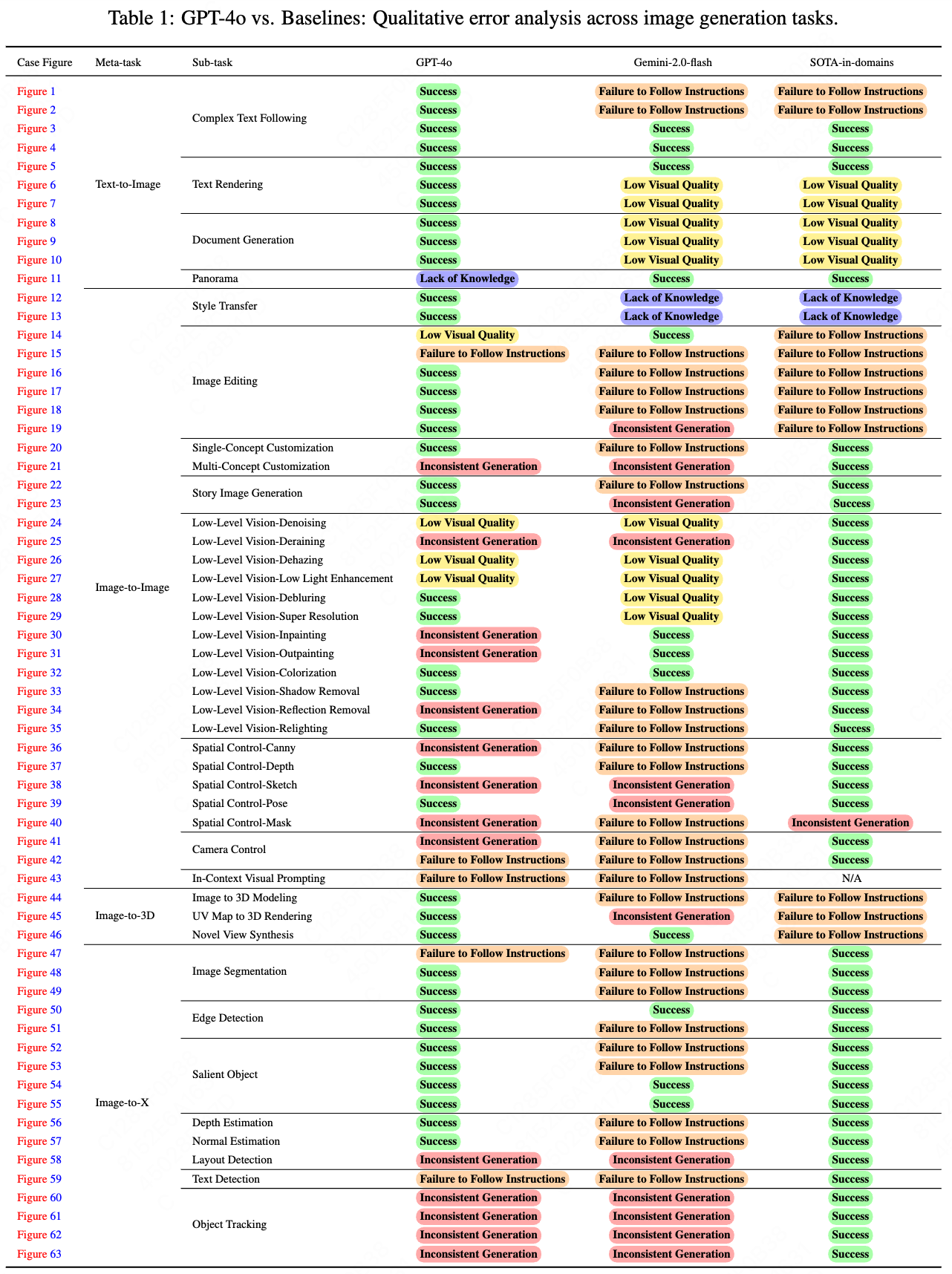

A 360° evaluation of GPT-4o image generation, benchmarking fidelity, controllability, and safety to guide industrial adoption.

Vision-Language & Agents

MLLMs and autonomous agents coordinate perception, planning, and feedback loops for adverse-weather driving and iterative data engines.

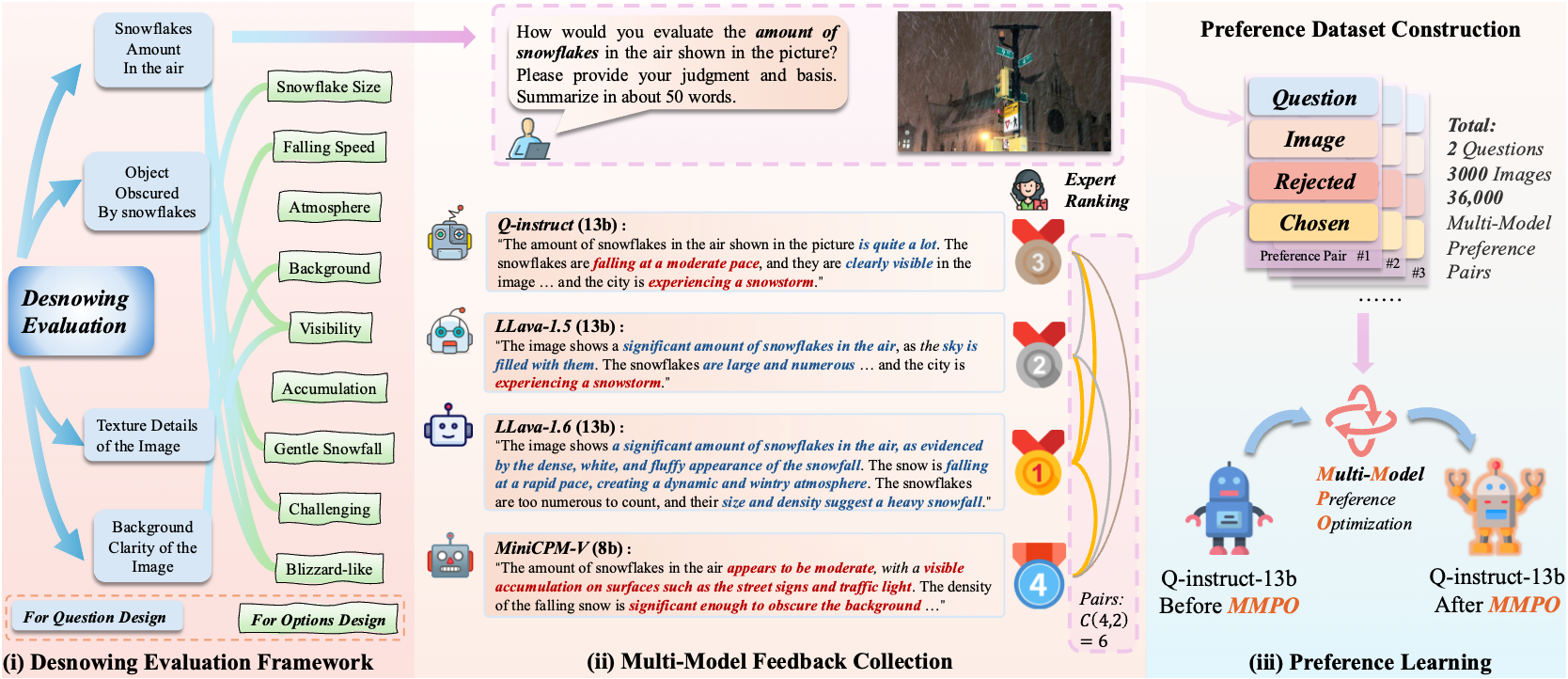

SnowMaster: Comprehensive Real-world Image Desnowing via MLLM with Multi-Model Feedback Optimization

Combines reasoning from multiple vision-language experts with visual priors to schedule desnowing operations that adapt to scene structure.

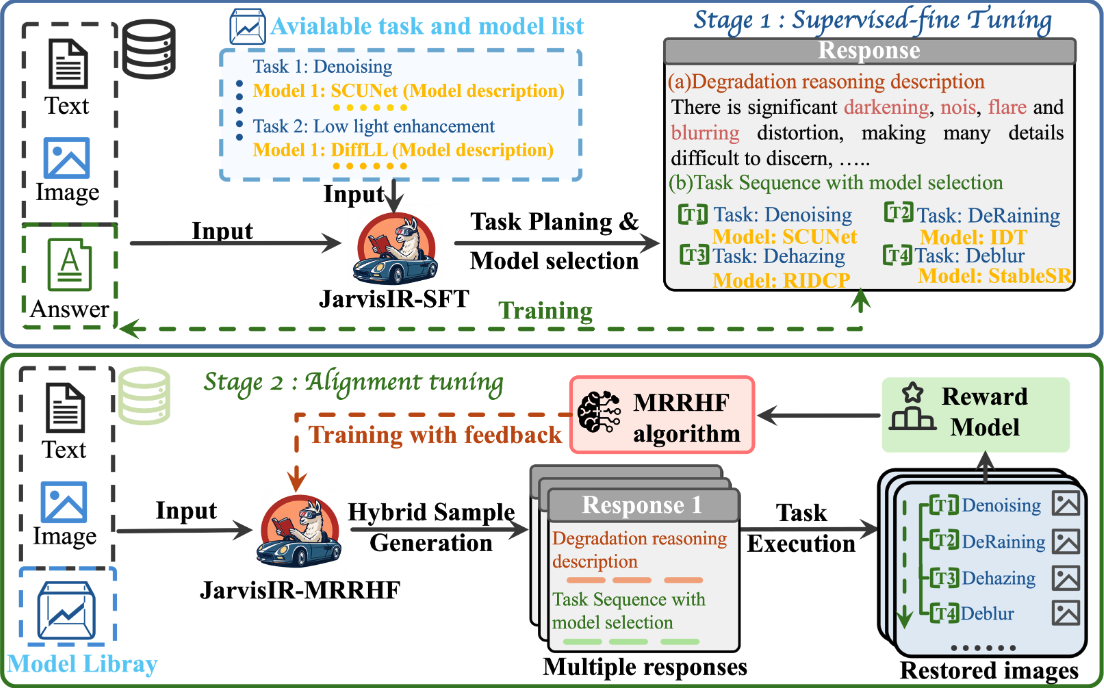

An intelligent restoration agent that reasons through MLLM dialogs and calls specialized tools to remove weather degradation for automotive perception stacks.

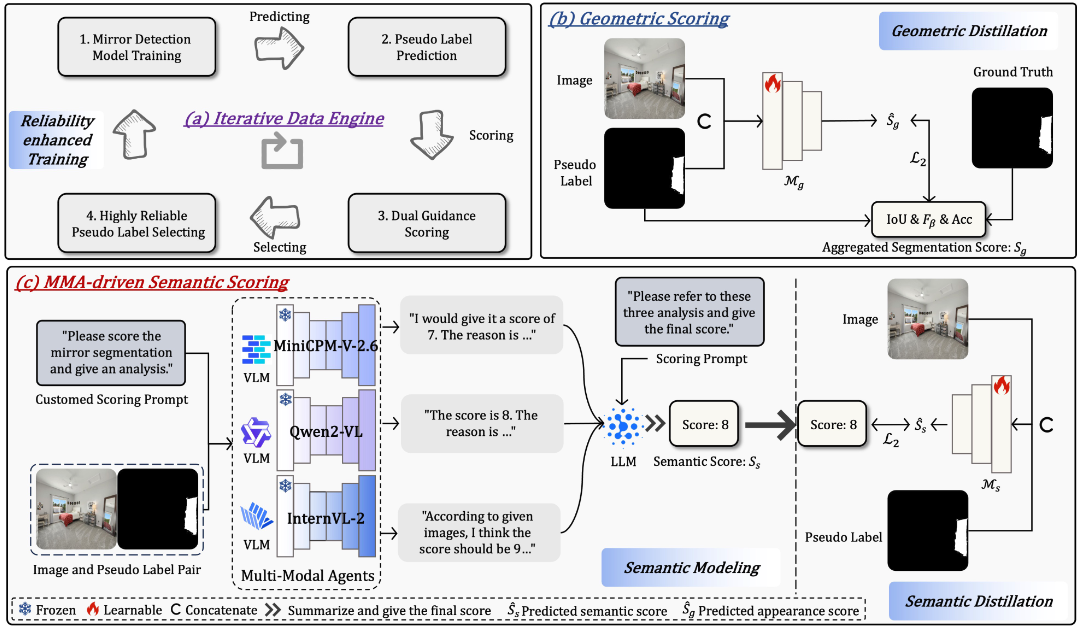

Uses agentic feedback and large-scale pseudo-labeling to tackle mirror detection, providing a blueprint for general VLM data refinement.

Low-Level Vision

Pairing generative priors with physical constraints yields reliable deweathering, low-light enhancement, and deraining.

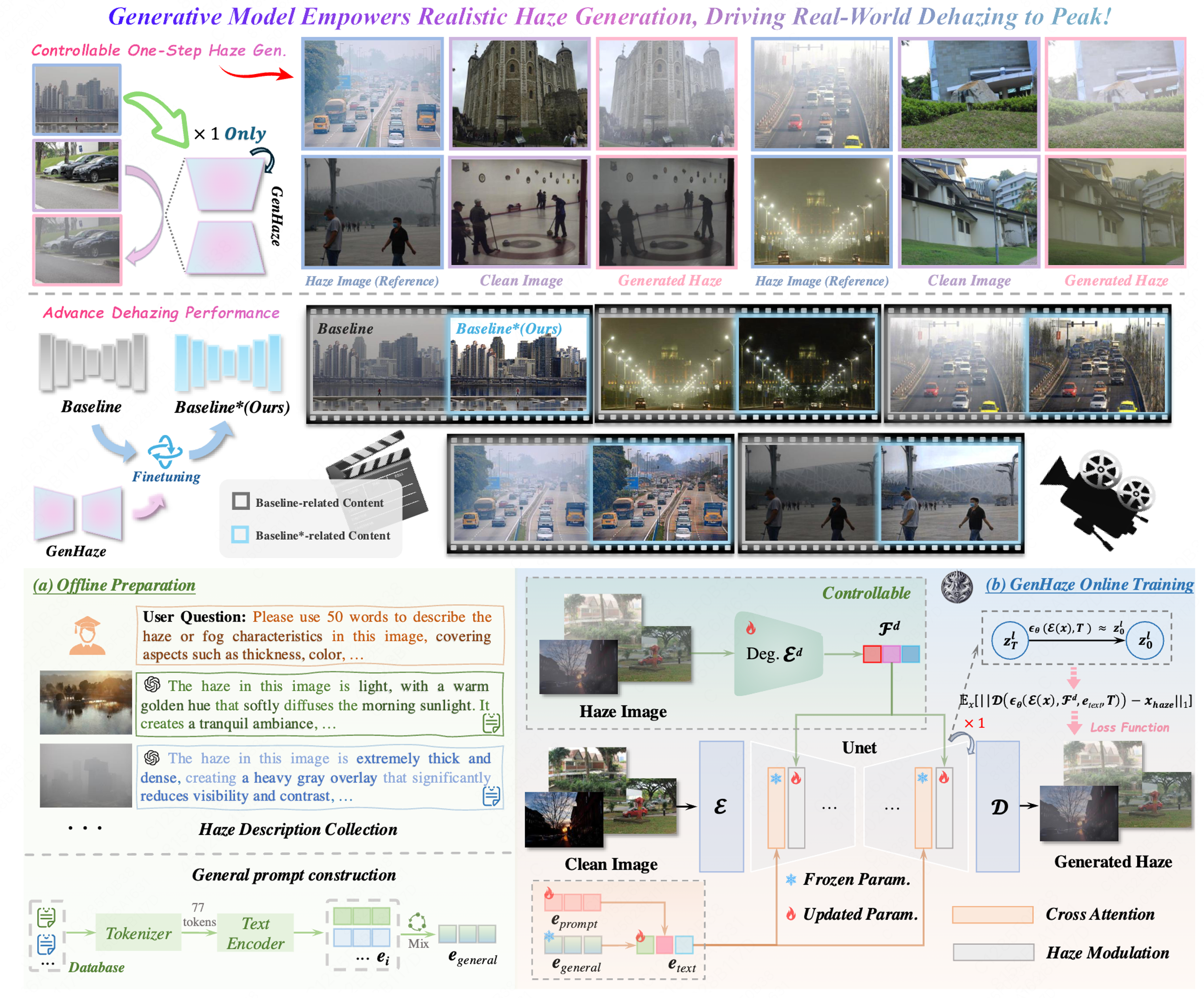

Provides a generative counterpart to dehazing by synthesizing paired data with explicit physical controls, enabling better restoration agents.

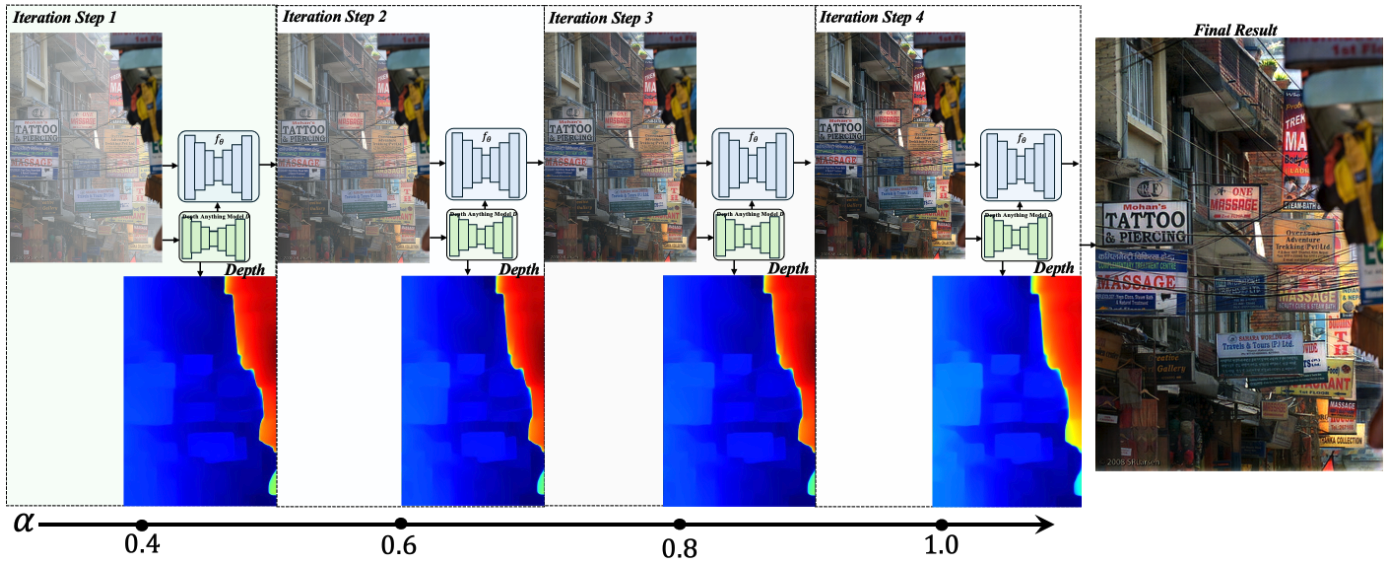

Aligns promptable depth priors with restoration networks, achieving plug-and-play robustness across weather distributions.

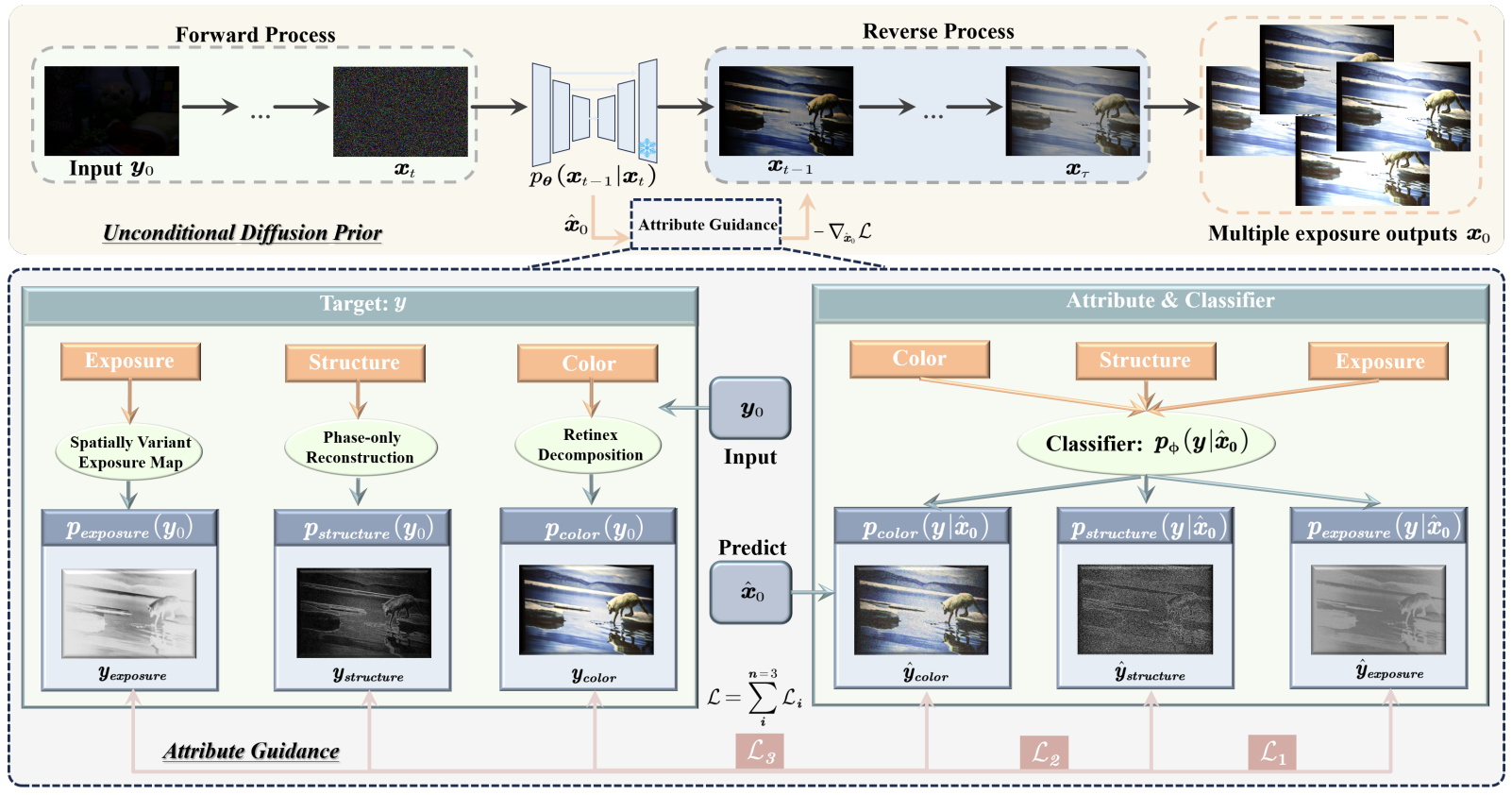

Introduces adaptive guidance so diffusion models can enhance low-light scenes without paired training data.