diff --git a/.gitignore b/.gitignore

index b2d6de3..4324211 100644

--- a/.gitignore

+++ b/.gitignore

@@ -18,3 +18,7 @@

npm-debug.log*

yarn-debug.log*

yarn-error.log*

+

+# R files

+.Rproj.user

+*.Rproj

diff --git a/docs/docs/03_data-collection/03_00_platform-specific guidelines/03_04_data-collection_meta_ads.mdx b/docs/docs/03_data-collection/03_00_platform-specific guidelines/03_04_data-collection_meta_ads.mdx

new file mode 100644

index 0000000..3175807

--- /dev/null

+++ b/docs/docs/03_data-collection/03_00_platform-specific guidelines/03_04_data-collection_meta_ads.mdx

@@ -0,0 +1,794 @@

+---

+title: "Data Collection of Facebook and Instagram Ads"

+---

+

+

+

+

+

+

+

+

+

+

+## Introduction

+

+Meta’s Ad Library provides a public record of ads that run on Facebook

+and Instagram. Researchers, journalists, and civic watchdogs can use

+this data to analyze advertising trends, for example, tracking political

+campaign ads, spending, and the reached demographics. The Meta Ad

+Library API offers programmatic access to these ads, enabling retrieval

+of detailed ad content and performance information. Each ad entry

+includes metadata such as the ad’s text, the advertiser’s page, the time

+period it ran, the amount spent (as a range), impressions delivered

+(also as a range), and breakdowns of the audience by age, gender, and

+region. Importantly, many metrics are given as ranges (min–max) rather

+than precise values. This tutorial will demonstrate how to use R (with

+the `tidyverse` ecosystem) and the `Radlibrary` R package to access the

+Meta Ad Library via its official API. We will walk through obtaining API

+access, constructing queries to find ads (by keyword or page id),

+retrieving ad data, and performing analyses such as ad volume and spend

+over time, top advertisers, and demographic targeting patterns.

+

+### Step 1: Setting Up API Access (Verification & Developer Account)

+

+Before writing any code, you need to secure access to the Ad Library

+API. Meta requires a few one-time setup steps:

+

+1. **Confirm your identity and location**: Facebook mandates an [ID

+ verification process](https://www.facebook.com/id/hub) for anyone

+ accessing political ad data (the same process required to run

+ political ads). You will need to provide a government ID and proof

+ of your country of residence. Approval can take 1–2 days.

+

+

+

+

+2. **Create a Facebook Developer account**: Go to the Facebook for

+ Developers portal and sign up with your Facebook account (if you

+ haven’t already). Agree to any platform policies as needed. Once you

+ have a developer account, create a new “App” in the dashboard

+ (choose Business or Custom app type for this

+ purpose). This app is just a container to obtain API credentials.

+

+3. **Generate an access token**: The Ad Library API is accessed via

+ Meta’s Graph API. The simplest way to get a token is by using the

+ [Graph API Explorer](https://developers.facebook.com/tools/explorer)

+ tool. Once you are on the Graph API Explorer page, generate a user

+ access token. You need to add the permission `ads_read` in the token

+ generation dialog so that the token is authorized to query the ads

+ archive. Once generated, copy this token for use in R. Keep it

+ confidential and treat it like a password – anyone with this token

+ could potentially query the API on your behalf until it expires.

+

+> Token expiration: By default, tokens from the Explorer are
+> [short-lived](https://developers.facebook.com/docs/facebook-login/guides/access-tokens#termtokens)
+> (usually about 1–2 hours). That may suffice for a short analysis
+> session, but in most cases you will need longer access. You can
+> exchange the short-lived token for a long-lived one, valid for 60
+> days, using your App’s App ID and App Secret. This tutorial proceeds
+> with a short-lived token for simplicity, but getting a long-lived
+> token for your own analysis is strongly encouraged. For instructions,
+> refer to the [Radlibrary documentation on persistent
+> tokens](https://facebookresearch.github.io/Radlibrary/articles/Radlibrary.html#generating-persistent-tokens).

+
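+A minimal sketch of the exchange request, assuming the Graph API’s
+`oauth/access_token` endpoint with `grant_type=fb_exchange_token` and an
+unversioned base URL. The helper name `build_token_exchange_url()` and
+the placeholder credentials are hypothetical; the code only assembles
+the URL and sends nothing:
+
+``` r
+# Hypothetical helper: builds the URL for exchanging a short-lived token.
+# Assumption: endpoint and parameter names follow Meta's access token guide.
+build_token_exchange_url <- function(app_id, app_secret, short_token) {
+  params <- c(
+    grant_type = "fb_exchange_token",
+    client_id = app_id,
+    client_secret = app_secret,
+    fb_exchange_token = short_token
+  )
+  # Percent-encode each value so special characters survive in the URL
+  encoded <- vapply(params, utils::URLencode, character(1), reserved = TRUE)
+  paste0(
+    "https://graph.facebook.com/oauth/access_token?",
+    paste0(names(params), "=", encoded, collapse = "&")
+  )
+}
+
+# Placeholder values for illustration only
+exchange_url <- build_token_exchange_url("APP_ID", "APP_SECRET", "SHORT_TOKEN")
+```
+
+Requesting this URL (for example with `httr::GET()`) should return a
+JSON body containing the long-lived token. Treat the URL itself as a
+secret, since it contains your App Secret.
+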

+### Step 2: Installing and Loading R Packages

+

+We will use an R package called `Radlibrary` (by Meta’s Facebook

+Research team) to interact with the Ad Library API. `Radlibrary` is a

+convenient wrapper that handles authentication and query construction,

+saving us from crafting raw Graph API calls (which we could also do if

+we feel fancy like that). It also helps format results into tidy data

+frames. In addition, we will use the `tidyverse` for data manipulation

+(`dplyr`, `tidyr`) and `ggplot2` for visualization. Finally, we will use

+my very own package,

+[`metatargetr`](https://github.com/favstats/metatargetr), to retrieve some ad

+spending data. If you haven’t installed these packages, do so first:

+

+``` r

+# Install pak, which we use below to install packages from CRAN and GitHub
+# (Radlibrary is not on CRAN as of writing)
+if (!requireNamespace("pak", quietly = TRUE)) {
+  install.packages("pak")
+}

+```

+

+``` r

+# Install Radlibrary

+pak::pak("facebookresearch/Radlibrary")

+# Install metatargetr

+pak::pak("favstats/metatargetr")

+# Install lubridate

+pak::pak("lubridate")

+# Install tidyverse if not already (includes dplyr, ggplot2, etc.)

+pak::pak("tidyverse")

+```

+

+``` r

+# Load the libraries in your R session

+library(Radlibrary)

+library(metatargetr)

+library(lubridate) # for convenient date functions

+library(tidyverse)

+```

+

+Make sure `Radlibrary` installed successfully (you might need to update
+Rtools on Windows or install additional system libraries on Linux).

+Now you are ready to use the Meta Ad Library API in R!

+

+### Step 3: Authenticating with your Access Token

+

+With your user access token in hand (from Step 1), you need to provide

+it to `Radlibrary` so it can authenticate API requests. **As a general

+rule, never hard-code the token directly in scripts.** One safe approach

+is to use R’s `readline()` function to paste the token interactively (this

+avoids storing it in your R command history):

+

+``` r

+# Prompt for the token (paste your token string at the prompt that appears)

+token <- readline(prompt = "Enter your Facebook API access token: ")

+```

+

+When you run this, R will pause and let you paste the token. Hit Enter

+and it will be stored in the token variable for use. This method ensures

+the token is not visible in your script or R history.

+

+**Optionally:**

+

+You can also save the token as an environment variable like this:

+

+``` r

+# Set the token as an environment variable

+Sys.setenv(META_API_TOKEN = token)

+```

+

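+`Sys.setenv()` only lasts for the current R session. To keep the token
+available across restarts, add a line of the form
+`META_API_TOKEN=<your token>` to your `~/.Renviron` file and restart R.
+Either way, you can then retrieve the token in your script like this: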

+

+``` r

+token <- Sys.getenv("META_API_TOKEN")

+```
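+
+A small sketch of a defensive lookup, assuming the variable name
+`META_API_TOKEN` from above; the helper `get_meta_token()` is
+hypothetical. It fails early with a clear message instead of sending
+requests with an empty token:
+
+``` r
+# Hypothetical helper: read the token and stop if it is missing
+get_meta_token <- function(var = "META_API_TOKEN") {
+  value <- Sys.getenv(var, unset = "")
+  if (!nzchar(value)) {
+    stop("Environment variable ", var, " is not set.", call. = FALSE)
+  }
+  value
+}
+
+# Usage: token <- get_meta_token()
+```
+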

+## Querying the Ad Library API

+

+### Step 4: Building a Query to the Ad Library API (`adlib_build_query`)

+

+Now we get to the moment we have been waiting for – how to get the data!

+The Ad Library API requires specifying what ads you want to retrieve.

+This is done by constructing a query with various parameters. The

+`Radlibrary` function `adlib_build_query()` helps create this query

+object.

+

+We will start with a simple example scenario: Suppose we want to find
+ads related to the climate in the run-up to the 2025 German parliamentary
+elections. We are interested in all such ads (whether currently active
+or inactive) that were shown in the three weeks before election day, but
+only those that were classified or self-identified as political or issue
+ads.
+
+First, we specify a list of all the variables that we would like to
+retrieve. Here I use all available variables as of June 2025.

+

+``` r

+## Fields to request for each ad

+ad_fields <- c(

+ ## some meta info and unique identifier

+ "page_id", "page_name",# "id", # id is added automatically

+ ## general info, text, description, run times

+ "ad_creation_time", "ad_delivery_start_time", "ad_delivery_stop_time",

+ "ad_creative_bodies", "ad_creative_link_captions", "ad_creative_link_descriptions",

+ "ad_creative_link_titles", "ad_snapshot_url", "languages", "publisher_platforms",

+ ## spending info

+ "currency", "spend", "bylines", "beneficiary_payers",

+ ## delivery and reach

+ "delivery_by_region", "demographic_distribution",

+ "estimated_audience_size", "impressions",

+ # "br_total_reach", # unique reach (only available for Brazil)

+ ## EU only

+ "eu_total_reach", "age_country_gender_reach_breakdown",

+ "target_ages", "target_gender", "target_locations"

+)

+```

+

+Now we are ready to build the query step by step:

+

+``` r

+# Build an Ad Library API query for ads related to "climate" in Germany during 2025 election

+query <- adlib_build_query(

+ ad_reached_countries = "DE", # country where ads were delivered

+ ad_delivery_date_min = "2025-02-03", # minimum date: first day of the 21-day window ending on election day

+ ad_delivery_date_max = "2025-02-23", # specify maximum date: election day

+ ad_active_status = "ALL", # include both active and inactive ads

+ search_terms = "klima", # keywords to search in ad text or metadata

+ ad_type = "POLITICAL_AND_ISSUE_ADS", # restrict to political/issue ads

+ fields = ad_fields, # data fields we want

+ limit = 200 # number of results per page (max 1000)

+)

+```

+

+> **Note**: You might encounter the following warning:

+> *Warning: Unsupported fields supplied:* followed by a list of

+> parameters.

+> This warning can be safely ignored. The `Radlibrary` package, despite

+> being developed by the Facebook team, may not be up to date with the

+> newest parameters.

+

+------------------------------------------------------------------------

+

+#### Parameter Breakdown

+

+Let us unpack the parameters used in the API query (and some additional

+ones):

+

+

+ **`ad_reached_countries`** specifies the countries where the ads were delivered.

+

+ For example, setting this to `"DE"` retrieves ads delivered in

+ Germany.

+ At least one country code must be specified. Multiple countries can be

+ provided as a vector, e.g., `c("US", "CA")`.

+

+

+

+

+

+ **`ad_delivery_date_min`** and **`ad_delivery_date_max`** define the date range for when the ads were delivered.

+

+The format should be `"YYYY-MM-DD"`.

+For instance, setting `ad_delivery_date_min = "2025-02-22"` and

+`ad_delivery_date_max = "2025-02-23"` retrieves ads delivered between

+February 22 and February 23, 2025.

+
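+A short sketch of how such a window can be derived in base R, assuming
+election day on 2025-02-23 and a 21-day window that includes election
+day itself (the variable names are illustrative):
+
+``` r
+election_day <- as.Date("2025-02-23")
+window_days <- 21
+
+# The window includes election day, so it starts 20 days earlier
+date_min <- election_day - (window_days - 1)
+date_max <- election_day
+
+# The API expects "YYYY-MM-DD" strings
+format(date_min, "%Y-%m-%d") # "2025-02-03"
+format(date_max, "%Y-%m-%d") # "2025-02-23"
+```
+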

+

+

+ **`ad_active_status`** determines the delivery status of the ads to retrieve.

+

+If not specified, the default is `"ACTIVE"`, which returns only

+currently active ads.

+For historical analysis, setting this to `"ALL"` retrieves both active

+and inactive ads.

+

+Valid values:

+- `"ALL"`: all ads, past and present

+- `"ACTIVE"`: only currently running ads

+- `"INACTIVE"`: only ads that have stopped running

+

+

+

+ **`search_terms`** is a keyword or phrase to search within the ad’s content, title, or disclaimer text.

+

+The API treats a blank space as a logical `AND` and searches for both

+terms without other operators.

+For example, `"climate change"` is interpreted as `"climate"` AND

+`"change"`.

+To search for an exact phrase, use the `search_type` parameter set to

+`"KEYWORD_EXACT_PHRASE"`.

+
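+The difference between the two search modes can be illustrated locally
+on toy strings. This is only a sketch of the matching logic described
+above, not the API’s actual search implementation, and the helper names
+are hypothetical:
+
+``` r
+texts <- c(
+  "Climate policy needs change now",
+  "Fight climate change together",
+  "Change your energy provider"
+)
+
+# Unordered keyword search: every word must appear somewhere in the text
+match_all_words <- function(text, query) {
+  words <- strsplit(tolower(query), " ", fixed = TRUE)[[1]]
+  all(vapply(words, grepl, logical(1), x = tolower(text), fixed = TRUE))
+}
+
+# Exact phrase search: the words must appear together and in order
+match_exact_phrase <- function(text, query) {
+  grepl(tolower(query), tolower(text), fixed = TRUE)
+}
+
+unordered <- vapply(texts, match_all_words, logical(1),
+                    query = "climate change", USE.NAMES = FALSE)
+exact <- vapply(texts, match_exact_phrase, logical(1),
+                query = "climate change", USE.NAMES = FALSE)
+unordered # TRUE TRUE FALSE
+exact     # FALSE TRUE FALSE
+```
+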

+

+

+ **`search_page_ids`** is an optional alternative to `search_terms` and retrieves ads from a specific Facebook Page.

+

+Provide the numeric Page ID (e.g., `"1234567890"`).

+This is ideal when focusing on a particular advertiser’s metadata and

+content.

+

+You can find page IDs via:

+- The Ad Library API (just query it by `search_terms` as we show below and take note of a page id of interest).

+- Download spending reports in the [Ad Library Report](https://www.facebook.com/ads/library/report/) which includes spending by page id.

+- In the URL of an Ad Library page, i.e. after the `view_all_page_id` URL parameter. For example:

+ [https://www.facebook.com/ads/library/?view_all_page_id=179587888720522](https://www.facebook.com/ads/library/?view_all_page_id=179587888720522) is the Ad Library Page for the U.S. Department of Homeland Security and *179587888720522* is the page id.

+
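+If you collect page IDs from Ad Library URLs, a small helper can pull
+them out. This is a sketch in base R; `extract_page_id()` is a
+hypothetical name, and the ID is kept as a string because it is too
+large for R’s integer type:
+
+``` r
+# Hypothetical helper: extract the numeric page ID from an Ad Library URL
+extract_page_id <- function(url) {
+  pattern <- "[?&]view_all_page_id=([0-9]+)"
+  match <- regmatches(url, regexec(pattern, url))[[1]]
+  if (length(match) < 2) NA_character_ else match[2]
+}
+
+page_id <- extract_page_id(
+  "https://www.facebook.com/ads/library/?view_all_page_id=179587888720522"
+)
+page_id # "179587888720522"
+```
+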

+

+

+ **`ad_type`** specifies the category of ads to retrieve.

+

+Valid values include:

+- `"ALL"`: Retrieves all ads, regardless of category.

+- `"POLITICAL_AND_ISSUE_ADS"`

+- `"EMPLOYMENT_ADS"`

+- `"HOUSING_ADS"`

+- `"FINANCIAL_PRODUCTS_AND_SERVICES_ADS"`

+

+

+

+ **`fields`** determines what information about each ad will be returned.

+

+In our example, we request specific fields defined in the `ad_fields`

+variable.

+The fields are categorized as follows:

+

+- **Meta Information and Identifiers**:

+ - `"page_id"`: Unique identifier for the Facebook Page.

+ - `"page_name"`: Name of the Facebook Page.

+- **General Information**:

+ - `"ad_creation_time"`: Time when the ad was created.

+ - `"ad_delivery_start_time"`: Start time of the ad delivery.

+ - `"ad_delivery_stop_time"`: Stop time of the ad delivery.

+ - `"ad_creative_bodies"`: Main text content of the ad.

+ - `"ad_creative_link_captions"`: Captions in the call-to-action section.

+ - `"ad_creative_link_descriptions"`: Descriptions in the call-to-action section.

+ - `"ad_creative_link_titles"`: Titles in the call-to-action section.

+ - `"ad_snapshot_url"`: URL to a snapshot of the ad.

+ - `"languages"`: Languages used in the ad.

+ - `"publisher_platforms"`: Platforms where the ad was published (e.g., Facebook, Instagram).

+- **Spending Information**:

+ - `"currency"`: Currency used for the ad spend.

+ - `"spend"`: Amount spent on the ad.

+ - `"bylines"`: Bylines associated with the ad.

+ - `"beneficiary_payers"`: Entities that paid for the ad.

+- **Delivery and Reach**:

+ - `"delivery_by_region"`: Regional delivery information.

+ - `"demographic_distribution"`: Demographic breakdown of the ad’s audience.

+ - `"estimated_audience_size"`: Estimated size of the audience.

+ - `"impressions"`: Number of times the ad was displayed.

+- **EU-Specific Fields**:

+ - `"eu_total_reach"`: Total reach within the European Union.

+ - `"age_country_gender_reach_breakdown"`: Breakdown of reach by age, country, and gender.

+ - `"target_ages"`: Targeted age groups.

+ - `"target_gender"`: Targeted genders.

+ - `"target_locations"`: Targeted locations.

+

+These fields provide comprehensive information about each ad, including

+its content, delivery, and audience targeting. For more info, you can

+check the [Meta Ad Library API documentation](https://www.facebook.com/ads/library/api/).

+

+

+

+ **`limit`** limits the number of results per API call.

+

+The default value is 25, and the maximum is 1,000.

+If your query could return more, you will need to paginate (more about

+that later).

+For now, we assume `200`, the value used in our query, is sufficient for demonstration purposes.

+

+

+------------------------------------------------------------------------

+

+#### Next Step

+

+At this point, we have only created a **query object**, a structured

+list containing all parameters. **The query has not yet been sent** to

+Meta.

+

+The function `adlib_build_query()` only constructs the query. You can

+inspect it by printing `query`, which will show its components and the

+exact URL to be called.

+

+Let us now proceed to **fetch the data**.

+

+### Step 5: Retrieving Ad Data from the API (`adlib_get`)

+

+To execute the query and get results, we use `Radlibrary`’s function

+`adlib_get()`. This function takes our query and the access token, sends

+the request to Meta’s Graph API, and returns the response. Let’s call

+it:

+

+``` r

+# Execute the query and retrieve data

+result <- adlib_get(query, token = token)

+```

+

+Under the hood, this hits the Graph API’s `/ads_archive` endpoint with all

+the parameters we specified. The result we get back is an object of

+class `adlib_data_response`. It contains the data and some metadata.

+

+``` r

+glimpse(result$data[[1]], max.level = 1)

+```

+```

+ $ id : chr "547343151714655"

+ $ page_id : chr "530858850114749"

+ $ page_name : chr "Undone Work GmbH"

+ $ ad_creation_time : chr "2025-02-23"

+ $ ad_delivery_start_time : chr "2025-02-23"

+ $ ad_delivery_stop_time : chr "2025-02-28"

+ $ ad_creative_bodies :List of 1

+ $ ad_creative_link_captions :List of 1

+ $ ad_snapshot_url : chr "https://www.facebook.com/ads/archive/render_ad/?id=547343151714655&access_token=XXXX"| __truncated__

+ $ languages :List of 1

+ $ publisher_platforms :List of 1

+ $ currency : chr "EUR"

+ $ spend :List of 2

+ $ bylines : chr "Undone Work GmbH"

+ $ beneficiary_payers :List of 1

+ $ delivery_by_region :List of 16

+ $ demographic_distribution :List of 15

+ $ estimated_audience_size :List of 1

+ $ impressions :List of 2

+ $ eu_total_reach : int 616

+ $ age_country_gender_reach_breakdown:List of 1

+ $ target_ages :List of 2

+ $ target_gender : chr "All"

+ $ target_locations :List of 1

+```

+

+Now that the result is in hand, let’s convert it into a more

+analysis-friendly format.

+

+### Step 6: Converting to a Tidy Data Frame

+

+`Radlibrary` provides an S3 method to turn the result into a tibble (a
+tidyverse-friendly data frame). We simply use `as_tibble()`:

+

+``` r

+ads_df <- as_tibble(result, censor_access_token = TRUE)

+```

+

+We set `censor_access_token = TRUE` to strip out the

+token from any embedded URLs in the data (this is a safety measure so we

+don’t accidentally reveal our token when inspecting data). Now `ads_df`

+is a tibble where each row is one ad and each column is a variable

+returned by the API.

+

+If you want to check the columns, try `glimpse(ads_df)` or
+`names(ads_df)` to inspect the structure of the `ads_df` data frame.
+Some of the key columns include:
+
+- `impressions_lower`, `impressions_upper`: The estimated range of
+  impressions delivered.
+- `spend_lower`, `spend_upper`: The estimated range of ad spend in the
+  currency used.
+- `demographic_distribution`: A so-called list-column containing, for
+  each ad, a data frame of demographic percentages (since we asked for
+  it). We will explore how to work with this column in Step 9.
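+
+Because spend and impressions come as ranges, a common first step is to
+derive a point estimate and a measure of uncertainty. The sketch below
+uses made-up toy values in place of real `ads_df` rows and takes the
+midpoint of each range, which is an assumption rather than anything the
+API reports:
+
+``` r
+library(dplyr)
+
+# Toy data standing in for a few rows of ads_df (values are made up)
+toy_ads <- tibble(
+  id = c("a1", "a2", "a3"),
+  spend_lower = c(0, 100, 1000),
+  spend_upper = c(99, 199, 1499)
+)
+
+toy_ranges <- toy_ads %>%
+  mutate(
+    spend_mid = (spend_lower + spend_upper) / 2, # midpoint as point estimate
+    spend_width = spend_upper - spend_lower      # width of the reported range
+  )
+```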

+

+### Step 7: Handling Pagination for Larger Datasets (`paginate_meta_api`)

+

+The Meta Ad Library API returns only a limited number of ads per

+request. To retrieve more than the default amount, you need to handle

+pagination by following the `next_page` links provided in the API

+response.

+

+While the `Radlibrary` package offers the `adlib_get_paginated()`

+function to assist with pagination, it unfortunately does NOT handle

+rate limiting or delays between requests. To address this, I have

+implemented a custom function, `paginate_meta_api()`, which automates

+pagination and includes logic to manage API rate limits by introducing

+appropriate delays between requests. Use `max_pages` to set how many
+pages to iterate through, `verbose = TRUE` to print progress updates
+while retrieving data, and `api_health = TRUE` (default `FALSE`) to
+report API usage limits.

+

+Here is how you can use the custom function:

+

+``` r

+# Load the custom pagination function

+source("https://gist.githubusercontent.com/favstats/ac37f6a7c881dddfa1c156bfb3e2dbdf/raw/b49e3f73881a4595309480e418658e018fbd0980/paginate_meta_api.R")

+

+# Retrieve all pages with delay logic

+climate_ads <- paginate_meta_api(query, token, max_pages = 100, verbose = FALSE, api_health = FALSE)

+```

+
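+Conceptually, paginating with delays boils down to a loop like the one
+below. This is a simplified sketch with a made-up `fetch_page()`
+stand-in that serves pages from memory; it is not the actual
+implementation of `paginate_meta_api()`:
+
+``` r
+# Hypothetical stand-in for one API request: returns a page of results
+# and the cursor of the next page (NULL when there are no more pages)
+fetch_page <- function(cursor) {
+  pages <- list(
+    list(data = c("ad_1", "ad_2"), next_cursor = 2),
+    list(data = c("ad_3", "ad_4"), next_cursor = 3),
+    list(data = "ad_5", next_cursor = NULL)
+  )
+  pages[[cursor]]
+}
+
+paginate_with_delay <- function(fetch, max_pages = 10, delay_seconds = 1) {
+  results <- list()
+  cursor <- 1
+  for (page in seq_len(max_pages)) {
+    response <- fetch(cursor)
+    results[[page]] <- response$data
+    cursor <- response$next_cursor
+    if (is.null(cursor)) break # no further pages
+    Sys.sleep(delay_seconds)   # pause between requests to respect rate limits
+  }
+  unlist(results)
+}
+
+all_ads <- paginate_with_delay(fetch_page, max_pages = 10, delay_seconds = 0)
+```
+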

+At this stage, we have a data frame `climate_ads` of all retrieved ads

+and their metadata. We can now perform analysis on this data. Let us

+tackle a few common analysis tasks one by one.

+

+## Analyzing the Data

+

+### Step 8: Analyzing Ad Volume and Top Advertisers

+

+A basic question is how the number of ads changes over time. For
+example, did advertising surge closer to election day? We can visualize
+the number of ads in our dataset by date, using the ad delivery start
+date as the day an ad “entered” the library. An ad that is active for
+multiple days is therefore counted only on the first day it ran. Let us
+create a time series of ad counts by day:

+

+``` r

+climate_ads %>%

+ mutate(start_date = as.Date(ad_delivery_start_time)) %>% # extract date portion

+ count(start_date) %>%

+ ggplot(aes(x = start_date, y = n)) +

+ geom_line(color = "steelblue") +

+ labs(x = "Date", y = "Number of Ads Started",

+ title = "Daily Count of New Ads in Ad Library (\"climate\" query in Germany)") +

+ theme_minimal()

+```

+

+

+

+

+This code groups ads by their start date and counts them, then plots a
+line graph. The result shows ads that started well before the window we
+specified. This sometimes happens: the API is not perfect, and the date
+filter presumably matches ads that were delivered during the window even
+if they started running earlier. We filter to include only ads that
+started within the specified timeframe.

+

+``` r

+climate_ads %>%

+ mutate(start_date = as.Date(ad_delivery_start_time)) %>% # extract date portion

+ count(start_date) %>%

+ filter(start_date >= as.Date("2025-02-03")) %>%

+ ggplot(aes(x = start_date, y = n)) +

+ geom_line(color = "darkgreen") +

+ labs(x = "Date", y = "Number of Ads Started",

+ title = "Daily Count of New Ads in Ad Library (\"climate\" query in Germany)") +

+ theme_minimal()

+```

+

+

+

+

+Another limitation with counting ads is that the ads listed in the ad

+library do not represent unique ads, but rather ad runs. If an

+advertiser runs the same ad again with some changes in settings, it will

+be counted as a separate ad. This may inflate the number of unique

+ads. One possible way to address this is to filter for unique texts

+(e.g. `ad_creative_bodies`).

+
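+A sketch of that deduplication idea on toy data, assuming
+`ad_creative_bodies` is a list-column of text as in the API response.
+The column `text_key` is a helper introduced here for illustration:
+
+``` r
+library(dplyr)
+
+# Toy data: the same text run twice by one page, plus one distinct ad
+toy_ads <- tibble(
+  id = c("a1", "a2", "a3"),
+  page_name = c("Page A", "Page A", "Page B"),
+  ad_creative_bodies = list("Klima schützen!", "Klima schützen!", "Jetzt handeln")
+)
+
+unique_ads <- toy_ads %>%
+  # Collapse the list-column into one string per ad to use as a key
+  mutate(text_key = vapply(ad_creative_bodies, paste, character(1),
+                           collapse = " || ")) %>%
+  distinct(page_name, text_key, .keep_all = TRUE)
+
+nrow(unique_ads) # 2
+```
+
+Keying on page and text together keeps identical wording from different
+advertisers as separate ads, which seems the safer default.
+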

+Another approach is to aggregate by spending, which gives a sense of

+where the advertiser’s focus lies.

+

+``` r

+climate_ads %>%

+ mutate(keyword = "climate") %>%

+ mutate(start_date = as.Date(ad_delivery_start_time)) %>% # extract date portion

+ group_by(start_date, keyword) %>%
+ summarize(spend_lower = sum(spend_lower),
+           spend_upper = sum(spend_upper),
+           .groups = "drop") %>%
+ # midpoint of the daily spending range as a rough point estimate
+ mutate(spend_mid = (spend_lower + spend_upper) / 2) %>%

+ filter(start_date >= as.Date("2025-02-03")) %>%

+ ggplot(aes(x = start_date, y = spend_mid, color = keyword)) +

+ geom_ribbon(aes(ymin = spend_lower, ymax = spend_upper), alpha = 0.1, linetype = "blank") +

+ geom_line() +

+ labs(x = "Date", y = "Daily Ad Spending in Euro",

+ title = "Daily Spending on Ads in Ad Library (\"climate\" query in Germany)") +

+ theme_minimal() +

+ scale_color_manual(values = c( "darkgreen")) +

+ theme(legend.position = "bottom")

+```

+

+

+

+

+#### Who is advertising on the climate topic?

+

+Another common analysis is to identify which organizations or pages
+spend the most on ads in your data. We can rank advertisers by the
+upper bound of their reported spending:

+

+``` r

+climate_ads %>%

+ group_by(page_name) %>%

+ dplyr::summarize(spend_upper = sum(spend_upper)) %>%

+ ungroup() %>%

+ arrange(desc(spend_upper)) %>%

+ slice(1:10) %>%

+ mutate(page_name =fct_reorder(page_name, spend_upper)) %>%

+ ggplot(aes(x = page_name, y = spend_upper)) +

+ geom_col(fill="darkgray") +

+ coord_flip() + # flip for horizontal bars (easier to read names)

+ labs(x = "Page Name", y = "Upper Spending Boundary",

+ title = "Top 10 Advertisers in \"Climate\" Ad Dataset") +

+ theme_minimal()

+```

+

+

+

+

+Given that search terms are sometimes a bit unpredictable and don’t
+always work as expected, we can also query selected advertisers directly
+by their page IDs, here the major national parties. We can look up the
+page IDs in the spending reports from Meta, conveniently archived by the
+[`metatargetr`](https://github.com/favstats/metatargetr) package. For a full tutorial on `metatargetr` and its capabilities, [see this tutorial](https://data-knowledge-hub.com/docs/data-analysis/04_05_metatargetr).

+

+``` r

+spending_report <- get_report_db("DE", timeframe = 30, ds = "2025-02-23")

+

+national_parties <- spending_report %>%

+ filter(page_name %in% c("Die Linke", "SPD", "BÜNDNIS 90/DIE GRÜNEN", "FDP", "CDU", "AfD"))

+

+# Build an Ad Library API query for all ads from the national parties' pages in Germany during the 2025 election

+query <- adlib_build_query(

+ ad_reached_countries = "DE", # country where ads were delivered

+ ad_delivery_date_min = "2025-02-03", # minimum date: first day of the 21-day window ending on election day

+ ad_delivery_date_max = "2025-02-23", # specify maximum date: election day

+ ad_active_status = "ALL", # include both active and inactive ads

+ search_page_ids = national_parties$page_id, # search page IDs, up to 10 at once

+ ad_type = "POLITICAL_AND_ISSUE_ADS", # restrict to political/issue ads

+ fields = ad_fields, # data fields we want

+ limit = 200 # number of results per page (max 1000)

+)

+

+top_ads <- paginate_meta_api(query, token, max_pages = 100, verbose = TRUE, api_health = TRUE)

+```

+

+We are going to visualize some of the text included inside the ad data

+by creating a *chatter plot*. For that, we also need some additional

+packages listed below.

+

+``` r

+pak::pak("tidytext")

+pak::pak("stopwords")

+pak::pak("ggrepel")

+```

+

+``` r

+# Define party colors

+party_colors <- c(

+ "Die Linke" = "#BE3075",

+ "SPD" = "#E3000F",

+ "BÜNDNIS 90/DIE GRÜNEN" = "#64A12D",

+ "FDP" = "#FFED00",

+ "CDU" = "#000000",

+ "AfD" = "#009EE0"

+)

+# Tokenize ad texts and count word frequencies

+top_ads %>%

+ unnest(ad_creative_bodies) %>%

+ tidytext::unnest_tokens(word, ad_creative_bodies) %>%

+ filter(!is.na(word)) %>%

+ anti_join(tibble(word = stopwords::stopwords("de")), by = "word") %>%

+ # Select top 30 words per party

+ count(page_name, word, sort = TRUE) %>%

+ group_by(page_name) %>%

+ top_n(30, n) %>%

+ ungroup() %>%

+ mutate(page_name = fct_relevel(page_name,

+ c("Die Linke", "SPD",

+ "BÜNDNIS 90/DIE GRÜNEN",

+ "FDP", "CDU", "AfD"))) %>%

+ # Create the chatter plot

+ ggplot(aes(x = page_name, y = n, label = word, color = page_name)) +

+ # geom_point(alpha = 0.7) +

+ ggrepel::geom_text_repel(

+ force = 5,

+ box.padding = 0.1,

+ max.overlaps = Inf,

+ segment.color = NA, # This removes the lines

+ size = 3

+ ) +

+ labs(

+ x = "Political Party (Left to Right)",

+ y = "Word Frequency",

+ title = "Common Words in Political Ads by Party"

+ ) +

+ scale_color_manual(values = party_colors) +

+ theme_minimal() +

+ scale_y_log10() +

+ theme(legend.position = "none")

+```

+

+

+

+This chatter plot visualizes the most frequent words found in the ads of

+Germany’s major political parties. The parties are arranged along the

+x-axis according to their position on the political spectrum, from left

+to right. The y-axis represents the frequency of each word on a

+logarithmic scale, which helps visualize words with a wide range of

+frequencies. This type of analysis allows us to quickly grasp the key

+themes and messaging priorities for each party. For instance, we can

+observe which topics are unique to certain parties and which are shared

+across the political landscape, providing insights into their campaign

+strategies and focus areas.

+

+### Step 9: Examining Demographic Distributions

+

+One aspect of the Ad Library data is the audience distribution for each

+ad. We requested demographic_distribution in our query, which for each

+ad includes the percentage of impressions by age bracket and gender.

+This data is returned as a nested list-column in our queried dataset. To analyze

+it, we need to unnest that list into a usable table.

+

+We can use `tidyr::unnest()` to expand the demographic distribution:

+

+``` r

+# Unnest demographic distribution into a long format data frame

+demo_df <- top_ads %>%

+ select(id, page_name, demographic_distribution) %>% # focus on relevant columns

+ unnest(demographic_distribution)

+

+head(demo_df)

+```

+```

+ ## # A tibble: 6 × 5

+ ## id page_name percentage age gender

+ ##

+ ## 1 2124558957977402 SPD 0.00029 18-24 female

+ ## 2 2124558957977402 SPD 0.00159 18-24 male

+ ## 3 2124558957977402 SPD 0.00391 25-34 female

+ ## 4 2124558957977402 SPD 0.00985 25-34 male

+ ## 5 2124558957977402 SPD 0.0136 35-44 female

+ ## 6 2124558957977402 SPD 0.0265 35-44 male

+```

+

+After unnesting, `demo_df` will have one row per demographic category

+per ad. It should include the columns: id (ad id), page_name, age,

+gender, and percentage. Each row might say, for example, ad X – age

+18-24 – female – 0.2 (meaning 20% of ad X’s impressions were shown to

+women aged 18-24). The percentages for a given ad across all age/gender

+categories sum up to 100%.

+
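+A quick sanity check of that property, sketched on a toy stand-in for
+`demo_df` with made-up percentages:
+
+``` r
+library(dplyr)
+
+# Toy stand-in for demo_df (percentages are made up)
+toy_demo <- tibble(
+  id = c("a1", "a1", "a1", "a2", "a2"),
+  age = c("18-24", "18-24", "25-34", "18-24", "25-34"),
+  gender = c("female", "male", "female", "male", "male"),
+  percentage = c(0.2, 0.3, 0.5, 0.6, 0.4)
+)
+
+# Each ad's shares should add up to (approximately) 1
+share_totals <- toy_demo %>%
+  group_by(id) %>%
+  summarise(total = sum(percentage), .groups = "drop")
+```
+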

+> Important: if an ad did not reach a particular demographic group, it may

+not have an entry for that group.

+

+Now, what can we learn from this? Here are a couple of insights we might

+extract:

+

+Which age groups are ads reaching most frequently? We can count how many

+ads reached each age group. For instance, how many ads reached any

+people in the 65+ category versus 18-24? If few ads have impressions in

+older age groups, that suggests advertisers either target younger users

+or simply fail to engage older audiences. Similarly, we could examine

+how many ads target women vs. men, or the average percentage of

+impressions to women vs. men.

+
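+Counting how many ads reached each age group could look like the sketch
+below. It uses a toy stand-in for `demo_df` with made-up values, so the
+numbers carry no meaning beyond illustrating the steps:
+
+``` r
+library(dplyr)
+
+# Toy stand-in for demo_df (values are made up)
+toy_demo <- tibble(
+  id = c("a1", "a1", "a2", "a2", "a3"),
+  age = c("18-24", "65+", "18-24", "25-34", "18-24"),
+  gender = c("female", "male", "male", "female", "female"),
+  percentage = c(0.9, 0.1, 0.5, 0.5, 1.0)
+)
+
+ads_per_age <- toy_demo %>%
+  filter(percentage > 0) %>%
+  distinct(id, age) %>% # one row per ad and age group
+  count(age, name = "n_ads", sort = TRUE)
+```
+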

+> *Note of caution:* A more robust analysis would weight the data by

+impressions or spending. Averaging percentages across ads without doing

+so treats a low-reach ad the same as a high-reach one. For simplicity,

+however, we proceed with the unweighted approach.

+

+For a quick view, we can calculate the overall gender split in relative

+impressions, assuming equal weight per ad (again, caution advised):

+

+``` r

+demo_df %>%

+ filter(age != "Unknown", gender != "unknown") %>%

+ group_by(page_name, age, gender) %>%

+ summarise(percentage = mean(percentage), .groups = "drop") %>%

+ mutate(percentage = ifelse(gender == "male", -percentage, percentage)) %>%

+ ggplot(aes(x = age, y = percentage, fill = gender)) +

+ geom_col(width = 0.8) +

+ coord_flip() +

+ facet_wrap(~page_name, ncol = 3) +

+ scale_y_continuous(labels = scales::percent_format(accuracy = 1)) +

+ labs(

+ x = "Age Group",

+ y = "Average Percentage of Impressions",

+ title = "Ad Audience Demographics by Party, Age, and Gender",

+ fill = "Gender"

+ ) +

+ theme_minimal() +

+ theme(legend.position = "bottom")

+```

+

+

+

+

+In the illustrative chart above, each bar shows the average share of an
+ad’s impressions that went to a given age and gender group, by party.
+We observe a trend where AfD reaches more younger men on average,
+whereas Die Linke is more likely to reach younger women. Keep in mind
+that this does not directly tell us the volume of impressions, just the
+average distribution of reach across ads. An ad with only a handful of
+impressions counts as much here as one with millions. To truly measure
+impression share, one would need to aggregate the percentages weighted
+by each ad’s total impressions. Because the data only provides ranges
+for impressions, a rough approach could be to use the midpoint of each
+ad’s impression range as a weight. We do not pursue this for the full
+dataset here, but it is something to consider for a more rigorous
+analysis.

+
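+As a rough illustration of the weighting idea, the sketch below compares
+an unweighted and an impression-weighted average on two made-up ads. The
+column `female_share` and the use of range midpoints as weights are
+assumptions for the example:
+
+``` r
+library(dplyr)
+
+# Toy data: share of impressions shown to women, with impression ranges
+toy_ads <- tibble(
+  id = c("a1", "a2"),
+  female_share = c(0.8, 0.2),
+  impressions_lower = c(1000, 10000),
+  impressions_upper = c(2000, 20000)
+)
+
+weighted_summary <- toy_ads %>%
+  mutate(impressions_mid = (impressions_lower + impressions_upper) / 2) %>%
+  summarise(
+    unweighted = mean(female_share),
+    weighted = weighted.mean(female_share, w = impressions_mid)
+  )
+```
+
+Here the unweighted average is 0.5, while the weighted one is pulled
+toward the larger ad (about 0.25), which shows how much a few
+high-reach ads can shift the picture.
+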

+In summary, the demographic data allows us to see who is being reached

+by these ads. Advertisers’ choices (or the outcome of the delivery

+algorithm) become visible: Are they reaching young adults more than

+seniors? Are they targeting predominantly one gender? These insights are

+valuable for understanding the focus and targeting strategies of

+political campaigns.

+

+## Conclusion

+

+In this tutorial, we demonstrated a full workflow for accessing and

+analyzing Facebook and Instagram advertising data using R and the Meta

+Ad Library API. We covered everything from setting up access credentials

+and verifying identity, to using the `Radlibrary` package to query the

+API, and finally exploring the data with `tidyverse` tools and

+visualizations. We learned how to retrieve ads by keyword or advertiser,

+how to handle pagination and nested demographic data, and how to create

+basic insights like time trends and top advertisers.

+

+The Meta Ad Library API gives researchers a way to study political

+advertising and how public discourse is shaped through paid messages. As

+a next step, you might refine these examples: try querying a different

+issue or country, dive deeper into ad content with text analysis, fetch

+regional distributions to map out where ads are being seen, or correlate

+spending with specific topics. You may also [check out my other tutorial](https://data-knowledge-hub.com/docs/data-analysis/04_05_metatargetr) on `metatargetr`, which adds features not present in the Ad Library API, such as retrieval of Ad Library reports and exact spending on specific target audiences (including detailed and custom audiences).

+

+Happy researching – and may your analyses shed light on the world of

+online (political) ads!

diff --git a/docs/docs/04_data-analysis/04_05_metatargetr.mdx b/docs/docs/04_data-analysis/04_05_metatargetr.mdx

new file mode 100644

index 0000000..08e2dc6

--- /dev/null

+++ b/docs/docs/04_data-analysis/04_05_metatargetr.mdx

@@ -0,0 +1,645 @@

+---

+title: "Analyzing Ad Targeting Insights: An Introduction to the metatargetr R Package"

+---

+

+

+

+

+

+

+

+

+

+

+

+## Introduction

+

+What political messages are being sent to which audiences? By using

+digital advertising tools provided by social media platforms,

+advertisers can select which audiences they want to reach and are able

+to send different messages to each of them. That is why

+understanding the use of target audiences is as crucial as knowing what

+is being advertised. While Meta’s official Ad Library API provides a

+range of data, its restrictive access requirements and limited targeting

+granularity often fall short of the needs of researchers and

+journalists. The `metatargetr` R package offers an alternative,

+unofficial route to this information, by retrieving and archiving ad

+data directly from Meta’s public-facing Ad Library.

+

+This tutorial focuses on how to use `metatargetr` to retrieve and, most

+importantly, analyze this targeting data. Unlike the official Meta Ad

+Library API, which provides data on an ad-by-ad basis with broad

+spending ranges, `metatargetr` offers a unique, advertiser-level

+perspective.

+

+Here are the key advantages over Meta’s official Ad Library API:

+

+- Advertiser-Level Data: Instead of looking at single ads, we get a

+ consolidated view of the entire targeting strategy of a Facebook page

+ or Instagram account.

+

+- Exact Spending per Criterion: The data provides the exact percentage

+ of a page’s total spend allocated to each specific targeting

+ criterion. This allows for precise analysis of budget allocation, a

+ feature not available through the official API.

+

+- Additional Audience Insights: The dataset goes beyond the demographic

+ targeting that is available via the API, as it reveals spending on

+ powerful tools like Detailed Targeting (e.g., interest profiles into

+ which Meta categorizes its users), Custom Audiences (e.g., targeting a

+ list of existing customers) and Lookalike Audiences (targeting users

+ similar to an existing audience). Furthermore, while only demographic

+ targeting (age, gender, location) is available for countries in the EU

+ since the implementation of the Digital Services Act (DSA),

+ `metatargetr` provides targeting insights for all advertising pages on

+ Meta in the world.

+

+This tutorial will walk you through installing the package, retrieving

+historical targeting data, and using tidyverse tools to create

+compelling visualizations that reveal these hidden strategies.

+

+Finally, it is important to note that `metatargetr` relies on web

+scraping. This means it can be susceptible to changes in Facebook’s

+website structure. While powerful, it should be considered a

+complementary tool to the official API. [For a guide on using the

+official Meta Ad Library API](https://data-knowledge-hub.com/docs/data-collection/03_00_platform-specific%20guidelines/03_04_data-collection_meta_ads), please see the other tutorial in this series.

+

+### Installation

+

+First, you will need to install `metatargetr` and a few other helpful

+packages from the tidyverse ecosystem. Since `metatargetr` is hosted on

+GitHub, we will use the `pak` package for a smooth installation process.

+

+``` r

+# Install pak if you don't have it already

+if (!requireNamespace("pak", quietly = TRUE)) install.packages("pak")

+

+

+# Install `metatargetr` and other useful packages

+pak::pak(c(

+ "favstats/metatargetr",

+ "tidyverse",

+ "lubridate",

+ "scales"

+))

+```

+

+Now load the packages:

+

+``` r

+library(metatargetr)

+library(tidyverse)

+library(lubridate)

+library(scales)

+```

+

+### Retrieving Targeting Information for Recent Ads (`get_targeting`)

+

+The core function for fetching live targeting data is `get_targeting()`.

+This function scrapes the “Audience” tab of a Page’s Ad Library section,

+providing insights into the age, gender, and location targeting as well

+as the custom and lookalike audience targeting of their *recent ads*

+(i.e., only for the last 7, 30, or 90 days).

+

+Let us retrieve the targeting data for a specific Facebook Page. You

+will need the Page ID, which can be found in the URL of the page’s Ad

+Library entry or in the [Meta Ad Library

+Report](https://www.facebook.com/ads/library/report/) (which you can

+also query via `metatargetr` using `get_ad_report`).

+

+For example, here is the URL of the Ad Library page of the U.S.

+Department of Homeland Security (DHS):

+

+> https://www.facebook.com/ads/library/?view_all_page_id=179587888720522

+

+The Page ID is the number after `view_all_page_id=` → **`179587888720522`**.

+

+Here are the most important function parameters:

+

+- `id`: A character string representing the (Facebook) Page ID.

+

+- `timeframe`: The time period for the data. Options are “LAST_7_DAYS”,

+ “LAST_30_DAYS”, or “LAST_90_DAYS”. The default is set at

+ “LAST_30_DAYS”.

+

+Let us retrieve the targeting info from a page that is currently

+conducting a large digital ad campaign, the U.S. Department of Homeland

+Security:

+

+``` r

+# Fetch targeting data for a specific page for the last 30 days

+dhs_targeting_data <- get_targeting(id = "179587888720522", timeframe = "LAST_30_DAYS")

+

+# Inspect the structure of the returned data

+glimpse(dhs_targeting_data)

+```

+```

+ ## Rows: 91

+ ## Columns: 15

+ ## $ value "All", "Women", "Men", "Spanish", "Boston (Manch…

+ ## $ num_ads 15, 0, 0, 15, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1…

+ ## $ total_spend_pct 1.00000000, 0.00000000, 0.00000000, 1.00000000, …

+ ## $ type "gender", "gender", "gender", "language", "locat…

+ ## $ location_type NA, NA, NA, NA, "geo_markets", "COUNTY", "BOROUG…

+ ## $ num_obfuscated NA, NA, NA, NA, 0, 0, 0, 0, 0, 0, 1, 0, 0, 0, 0,…

+ ## $ is_exclusion NA, NA, NA, NA, FALSE, FALSE, FALSE, FALSE, FALS…

+ ## $ detailed_type NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, …

+ ## $ ds "2025-07-24", "2025-07-24", "2025-07-24", "2025-…

+ ## $ main_currency "USD", "USD", "USD", "USD", "USD", "USD", "USD",…

+ ## $ total_num_ads 15, 15, 15, 15, 15, 15, 15, 15, 15, 15, 15, 15, …

+ ## $ total_spend_formatted "$347,094", "$347,094", "$347,094", "$347,094", …

+ ## $ is_30_day_available TRUE, TRUE, TRUE, TRUE, TRUE, TRUE, TRUE, TRUE, …

+ ## $ is_90_day_available TRUE, TRUE, TRUE, TRUE, TRUE, TRUE, TRUE, TRUE, …

+ ## $ page_id "179587888720522", "179587888720522", "179587888…

+```

+

+The output will be a tibble where each row represents a specific

+demographic or location targeting segment for the ads run by that page

+in the specified timeframe. Key columns include:

+

+- `value`: The exact targeting criterion used.

+

+- `type`: The category of targeting (e.g., “age”, “gender”, “location”,

+ etc.).

+

+- `total_spend_pct`: The proportion of the page’s ad spend directed at

+ a target audience (`value`) within the timeframe specified.

+

+- `total_spend_formatted`: The total ad spend of the page in the

+ timeframe specified.

+

+- `main_currency`: Information about the currency used by the page.
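+
+To get a first overview of such a tibble, one option is to count the criteria per targeting `type` and to look at the criterion with the largest spend share within each type. The following is a minimal sketch on a made-up tibble that only mimics the columns described above; the values are invented for illustration.
+
+``` r
+library(dplyr)
+
+# Made-up rows mimicking the structure returned by get_targeting()
+targeting_toy <- tibble(
+  value = c("All", "Women", "Men", "Spanish", "Texas", "Florida"),
+  type = c("gender", "gender", "gender", "language", "location", "location"),
+  total_spend_pct = c(1, 0, 0, 1, 0.6, 0.4)
+)
+
+# How many criteria exist per targeting type?
+type_counts <- targeting_toy %>%
+  count(type, name = "n_criteria")
+
+# Which criterion receives the largest spend share within each type?
+top_per_type <- targeting_toy %>%
+  group_by(type) %>%
+  slice_max(order_by = total_spend_pct, n = 1, with_ties = FALSE) %>%
+  ungroup()
+
+top_per_type
+```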

+

+### Historical Targeting Data at Scale (`get_targeting_db`)

+

+The `get_targeting()` function is sufficient for retrieving targeting info

+for specific pages that have run ads within the last 90 days. However,

+once 90 days have passed, Meta *does not* provide access to this data

+anymore. This is where `metatargetr`’s true power lies: it retrieves,

+archives, and provides access to a vast repository of pre-collected

+targeting data for *all pages running political advertisements in the

+world*. This data can be accessed through the `get_targeting_db()`

+function, which downloads historical datasets that have been collected since

+late 2023.

+

+Here are the most important function parameters:

+

+- `the_cntry`: The two-letter ISO country code for the desired dataset

+ (e.g., “DE”, “US”).

+

+- `tf`: The timeframe (“LAST_7_DAYS”, “LAST_30_DAYS”, “LAST_90_DAYS”);

+ the examples below pass the number of days as a string, e.g. `"90"`.

+

+- `ds`: A date string in “YYYY-MM-DD” format, identifying the date of

+ the specific archived dataset.

+

+A tip before you download a historical dataset: the

+`get_targeting_metadata()` function lets you see the available

+dates for a given country and timeframe. This is useful because the

+automated retrieval process behind the package may have missed a date

+for a specific country, or Meta may have skipped it.

+

+``` r

+# Get metadata for 90-day timeframe datasets in the US

+us_90_day_metadata <- get_targeting_metadata(country_code = "US", timeframe = "90")

+

+# View the most recent available dates

+head(us_90_day_metadata)

+```

+```

+ ## # A tibble: 6 × 3

+ ## cntry ds tframe

+ ##

+ ## 1 US 2025-07-24 last_90_days

+ ## 2 US 2025-07-23 last_90_days

+ ## 3 US 2025-07-22 last_90_days

+ ## 4 US 2025-07-21 last_90_days

+ ## 5 US 2025-07-20 last_90_days

+ ## 6 US 2025-07-18 last_90_days

+```
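+
+Because some dates can be missing, it may help to pick the closest archived snapshot on or before the date you are interested in. The following is a minimal sketch, assuming the metadata has a `ds` column in “YYYY-MM-DD” format as in the output above. The toy tibble stands in for the real metadata, and `target_date` is a hypothetical variable introduced for illustration.
+
+``` r
+library(dplyr)
+
+# Stand-in for the tibble returned by get_targeting_metadata()
+metadata_toy <- tibble(
+  cntry = "US",
+  ds = c("2025-07-24", "2025-07-23", "2025-07-22",
+         "2025-07-21", "2025-07-20", "2025-07-18"),
+  tframe = "last_90_days"
+)
+
+# No snapshot exists for this date in the toy metadata
+target_date <- as.Date("2025-07-19")
+
+closest_ds <- metadata_toy %>%
+  mutate(ds_date = as.Date(ds)) %>%
+  # Keep only snapshots taken on or before the target date
+  filter(ds_date <= target_date) %>%
+  slice_max(order_by = ds_date, n = 1) %>%
+  pull(ds)
+
+closest_ds
+```
+
+The resulting string can then be passed as the `ds` argument of `get_targeting_db()`.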

+

+Once you have identified a dataset you want, you can download it with

+`get_targeting_db()`. Note that the archived data is typically available

+with a delay of a few days. This function allows for powerful

+longitudinal analysis across thousands of advertisers.

+

+``` r

+# Download the US 90-day targeting dataset from a specific date in the past

+us_targeting_archive <- get_targeting_db(the_cntry = "US", tf = "90", ds = "2025-06-30")

+

+# Inspect the archived data

+# It contains the same structure as the live data, but for thousands of pages

+glimpse(us_targeting_archive)

+```

+```

+ ## Rows: 690,219

+ ## Columns: 37

+ ## $ internal_id NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, N…

+ ## $ no_data NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, N…

+ ## $ tstamp 2025-07-04 05:30:28, 2025-07-04 05:30:28, 20…

+ ## $ page_id "761832453834971", "761832453834971", "761832…

+ ## $ cntry "US", "US", "US", "US", "US", "US", "US", "US…

+ ## $ page_name "League of Independent Voters of Texas", "Lea…

+ ## $ partyfacts_id NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, N…

+ ## $ sources NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, N…

+ ## $ country NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, N…

+ ## $ party NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, N…

+ ## $ left_right NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, N…

+ ## $ tags NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, …

+ ## $ tags_ideology NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, N…

+ ## $ disclaimer "LEAGUE OF INDEPENDENT VOTERS OF TEXAS", "LEA…

+ ## $ amount_spent_usd "405", "405", "405", "405", "405", "405", "40…

+ ## $ number_of_ads_in_library "14", "14", "14", "14", "14", "14", "14", "14…

+ ## $ date "2025-06-29", "2025-06-29", "2025-06-29", "20…

+ ## $ path "extracted/FacebookAdLibraryReport_2025-06-29…

+ ## $ tf "last_90_days", "last_90_days", "last_90_days…

+ ## $ remove_em FALSE, FALSE, FALSE, FALSE, FALSE, FALSE, FAL…

+ ## $ total_n 35543, 35543, 35543, 35543, 35543, 35543, 355…

+ ## $ amount_spent 405, 405, 405, 405, 405, 405, 405, 405, 405, …

+ ## $ value "All", "Women", "Men", "Bastrop, TX, United S…

+ ## $ num_ads 14, 0, 0, 7, 1, 6, 1, 0, 0, 0, 0, 0, 14, 14, …

+ ## $ total_spend_pct 1.00000000, 0.00000000, 0.00000000, 0.6351905…

+ ## $ type "gender", "gender", "gender", "location", "lo…

+ ## $ location_type NA, NA, NA, "CITY", "CITY", "countries", "zip…

+ ## $ num_obfuscated NA, NA, NA, 2, 1, 0, 0, NA, NA, NA, NA, NA, N…

+ ## $ is_exclusion NA, NA, NA, FALSE, FALSE, FALSE, FALSE, NA, N…

+ ## $ custom_audience_type NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, N…

+ ## $ ds "2025-06-30", "2025-06-30", "2025-06-30", "20…

+ ## $ main_currency "USD", "USD", "USD", "USD", "USD", "USD", "US…

+ ## $ total_num_ads 14, 14, 14, 14, 14, 14, 14, 14, 14, 14, 14, 1…

+ ## $ total_spend_formatted 405, 405, 405, 405, 405, 405, 405, 405, 405, …

+ ## $ is_30_day_available TRUE, TRUE, TRUE, TRUE, TRUE, TRUE, TRUE, TRU…

+ ## $ is_90_day_available TRUE, TRUE, TRUE, TRUE, TRUE, TRUE, TRUE, TRU…

+ ## $ detailed_type NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, N…

+```

+

+## Analyzing DHS Ad Campaigns 🏛️

+

+Now, let us apply what we have learned in a practical case study. We

+will analyze the ad campaigns of the U.S. Department of Homeland

+Security (DHS) to showcase how to combine historical data and visualize

+targeting strategies. In this case study we analyze audience segments

+derived from detailed targeting, how spend is allocated across those

+segments, the county-level geographic focus of the campaign, and which

+other advertisers use DHS-style proxy targeting. If you are interested

+in a similar analysis I have conducted for a recent blog post of mine,

+you can read the full post [here](https://www.favstats.eu/post/dhs/).

+

+

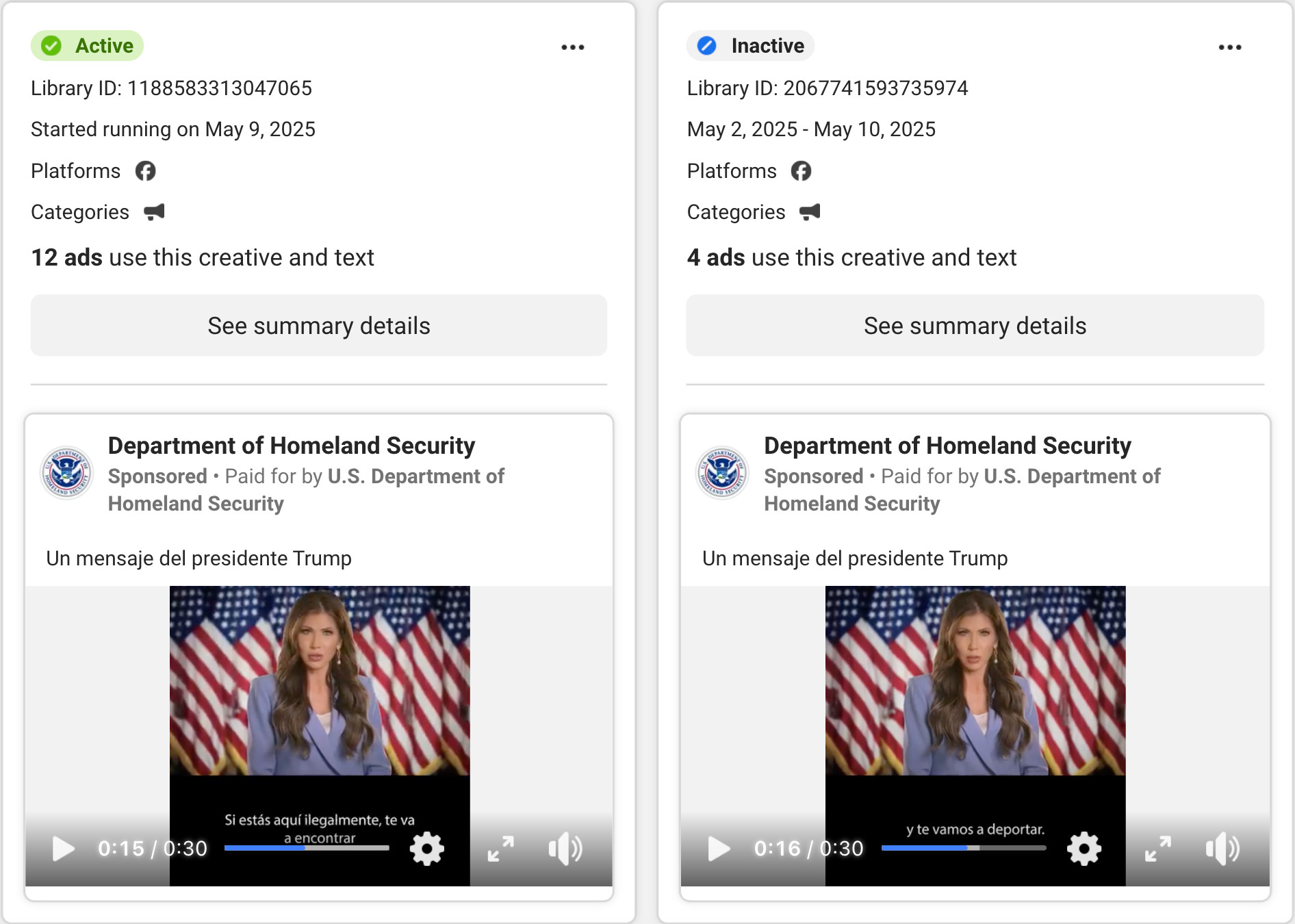

+Figure: An ad run by DHS in which DHS Secretary Kristi Noem threatens "if you are here illegally we will find you and deport you", with Spanish subtitles.

+

+First, we will use `get_targeting_db()` to download two historical

+snapshots of U.S. ad data. By combining data from different time points,

+we can create a more comprehensive picture of an advertiser’s strategy

+over a longer period.

+

+

+### Retrieving and Combining Data

+

+``` r

+# Download two datasets from different dates

+targeting_jun <- get_targeting_db(the_cntry = "US", tf = "90", ds = "2025-06-30")

+targeting_apr <- get_targeting_db(the_cntry = "US", tf = "90", ds = "2025-04-01")

+

+# Combine the datasets and filter for the DHS page

+# The DHS Facebook Page ID is "179587888720522"

+dhs_data_raw <- targeting_jun %>%

+ bind_rows(targeting_apr) %>%

+ filter(page_id == "179587888720522")

+```

+

+

+The raw data contains spending percentages relative to the total spend

+at the time of each snapshot. To get an accurate picture of the total

+spend over our *combined period*, we need to aggregate the data

+correctly. The helper function `aggr_targeting` recalculates the

+spending for each targeting criterion based on the new, combined total.

+

+``` r

+# Apply the function to our DHS data

+dhs_data_agg <- aggr_targeting(dhs_data_raw)

+```

+

+> Note: the `aggr_targeting()` function creates a variable called

+> `spend_per` which shows the spend per targeting criterion

+> across the combined period. If you pull raw data with `get_targeting_db()`, you have to compute this measure

+> yourself as *total spending* (`total_spend_formatted`) *×* *share of spending*

+> (`total_spend_pct`).
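+
+A minimal sketch of that manual computation on made-up data follows. It also sums the result across two snapshots, which is only a rough approximation of the idea and not the implementation of `aggr_targeting()`. `parse_number()` comes from `readr` and strips the currency formatting in case `total_spend_formatted` is stored as text.
+
+``` r
+library(dplyr)
+library(readr)
+
+# Made-up rows from two snapshots of the same page
+raw_toy <- tibble(
+  ds = c("2025-04-01", "2025-04-01", "2025-06-30", "2025-06-30"),
+  value = c("Spanish", "Texas", "Spanish", "Texas"),
+  total_spend_pct = c(1, 0.5, 0.8, 0.25),
+  total_spend_formatted = c("$1,000", "$1,000", "$2,000", "$2,000")
+)
+
+spend_toy <- raw_toy %>%
+  mutate(
+    # Convert "$1,000" to 1000; as.character() keeps this safe for numeric input
+    total_spend = parse_number(as.character(total_spend_formatted)),
+    # Spend per criterion = total spend x share of spend
+    spend_per = total_spend * total_spend_pct
+  )
+
+# Sum the per-criterion spend across both snapshots
+combined_toy <- spend_toy %>%
+  group_by(value) %>%
+  summarise(spend_per = sum(spend_per), .groups = "drop")
+
+combined_toy
+```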

+

+

+### Visualizing "Detailed" Targeting Criteria

+

+One of the most powerful features of this dataset is the ability to see

+the specific interests and behaviors an advertiser targets – data that

+is not available via the official API. Let us visualize what portion of

+the DHS budget was spent on these “detailed” targeting criteria.

+

+

+``` r

+# Filter for "detailed" targeting types and plot the top categories

+dhs_data_agg %>%

+ filter(type == "detailed") %>%

+ # For better labels, combine the type and value

+ mutate(

+ display_value = str_wrap(paste0(str_to_title(detailed_type), ": ", value), width = 40)

+ ) %>%

+ # Keep the top 10 criteria by spend

+ slice_max(order_by = spend_per, n = 10) %>%

+ # Create the plot

+ ggplot(aes(x = spend_per, y = fct_reorder(display_value, spend_per))) +

+ geom_col(fill = "#003366") +

+ geom_text(

+ aes(label = dollar(spend_per, accuracy = 1)),

+ hjust = -0.1,

+ size = 3.5,

+ fontface = "bold"

+ ) +

+ scale_x_continuous(

+ labels = label_dollar(),

+ expand = expansion(mult = c(0, 0.15))

+ ) +

+ labs(

+ title = "DHS Detailed Targeting: Top Spending Categories",

+ subtitle = "Spend on interests, behaviors, and demographic categories on Meta's platforms.",

+ x = "Estimated Ad Spend (USD)",

+ y = NULL,

+ caption = "Data Source: Meta Ad Library via metatargetr"

+ ) +

+ theme_minimal(base_size = 14) +

+ theme(

+ panel.grid.major.y = element_blank(),

+ plot.title.position = "plot"

+ )

+```

+

+

+

+

+

+This chart clearly shows the specific audience segments the DHS

+prioritized. We can see a significant focus on users who are interested

+in Mexican culture events and music, and whose language is set to

+Spanish. This provides concrete, data-driven insights into their

+campaign strategy that would be difficult to obtain otherwise.

+

+> Note: When multiple targeting criteria show identical spend, this likely indicates that they were applied together on the same underlying ads. Because the retrieved data is aggregated at the advertiser level, it is hard to isolate which ads combined several criteria. For a more conservative measure of spend, we could divide the spend by the number of targeting criteria that share the same values (same number of ads and same spend), assuming that spending was divided about equally across these criteria.
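+
+That equal-split assumption could be sketched as follows. The toy data and the column names `n_shared` and `spend_adj` are illustrative, and the sketch assumes that a `num_ads` column is available alongside `spend_per`. Whether an equal split is appropriate depends on the campaign.
+
+``` r
+library(dplyr)
+
+# Made-up detailed targeting rows; the first two share ad count and spend
+detailed_toy <- tibble(
+  value = c("Interest A", "Interest B", "Interest C"),
+  num_ads = c(10, 10, 4),
+  spend_per = c(5000, 5000, 1200)
+)
+
+adjusted_toy <- detailed_toy %>%
+  # Criteria with the same number of ads and the same spend are treated
+  # as having been applied together on the same ads
+  group_by(num_ads, spend_per) %>%
+  mutate(
+    n_shared = n(),
+    spend_adj = spend_per / n_shared
+  ) %>%
+  ungroup()
+
+adjusted_toy
+```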

+

+

+### Visualizing Location Targeting Criteria

+

+Next, let us analyze where the money was spent. We can filter the data

+for location targeting and visualize the top-spending counties. This

+reveals the geographic focus of the campaigns.

+

+``` r

+# Filter for county-level location targeting and plot the top 15

+dhs_data_agg %>%

+ filter(location_type == "COUNTY") %>%

+ slice_max(order_by = spend_per, n = 15) %>%

+ mutate(

+ location_label = str_remove(value, ", United States")

+ ) %>%

+ ggplot(aes(x = spend_per, y = fct_reorder(location_label, spend_per))) +

+ geom_col(fill = "#c00000") +

+ geom_text(

+ aes(label = dollar(spend_per, accuracy = 1)),

+ hjust = -0.1,

+ size = 3.5,

+ fontface = "bold"

+ ) +

+ scale_x_continuous(

+ labels = label_dollar(),

+ expand = expansion(mult = c(0, 0.15))

+ ) +

+ labs(

+ title = "Top 15 US Counties by DHS Ad Spend",

+ subtitle = "Estimated ad spending by the Department of Homeland Security on Meta's platforms.",

+ x = "Estimated Ad Spend (USD)",

+ y = NULL,

+ caption = "Data Source: Meta Ad Library via metatargetr"

+ ) +

+ theme_minimal(base_size = 14) +

+ theme(

+ panel.grid.major.y = element_blank(),

+ plot.title.position = "plot"

+ )

+```

+

+

+

+This visualization instantly reveals the geographic focus of the DHS’s

+advertising efforts. The spending is heavily concentrated in major

+metropolitan areas, particularly in states like California, Texas, New

+York, and Florida, which have a large share of Latino citizens. This kind of

+analysis is invaluable for understanding the geographic scope and

+strategy of public information campaigns.
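+
+To check the state-level pattern more directly, county spend could be summed per state. The following is a minimal sketch, assuming county labels follow a “County, State, United States” pattern. Both the toy rows and that pattern are assumptions, so inspect the actual `value` strings first.
+
+``` r
+library(dplyr)
+library(stringr)
+
+# Made-up county rows with spend per criterion
+county_toy <- tibble(
+  value = c("County A, Texas, United States",
+            "County B, Texas, United States",
+            "County C, Florida, United States"),
+  spend_per = c(3000, 1000, 2500)
+)
+
+state_spend <- county_toy %>%
+  mutate(
+    # Drop the country suffix, then keep the text after the last comma
+    state = value %>%
+      str_remove(", United States$") %>%
+      str_extract("[^,]+$") %>%
+      str_trim()
+  ) %>%
+  group_by(state) %>%
+  summarise(spend_per = sum(spend_per), .groups = "drop") %>%
+  arrange(desc(spend_per))
+
+state_spend
+```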

+

+### Visualizing Top 10 Spenders using Ethnic Proxies

+

+

+> Who else is using the targeting criteria that DHS uses

+> as proxies to reach the Latino community?

+

+First, we extract the detailed targeting criteria used by the DHS ads. We

+then keep only the rows of the *full* U.S. data that match these criteria and aggregate them.

+

+``` r

+latino_targeting <- dhs_data_agg %>%

+ filter(type == "detailed") %>%

+ distinct(value, type) %>%

+ # Remove "Friends of football fans", which is not by itself related to this audience

+ filter(value != "Friends of football fans")

+

+us_aggr <- targeting_jun %>%

+ bind_rows(targeting_apr) %>%

+ # Keep only rows that match the DHS detailed targeting criteria

+ inner_join(latino_targeting, by = c("value", "type")) %>%

+ aggr_targeting()

+```

+

+Next, we focus only on the top 10 spenders that have used these

+targeting categories.

+

+``` r

+top_10_data_for_plot <- us_aggr %>%

+ distinct(page_id, total_spend) %>%

+ arrange(desc(total_spend)) %>%

+ slice(1:10) %>% select(page_id)

+```

+

+Finally, we can put everything together and reveal who the top spenders

+are:

+

+``` r

+us_aggr %>%

+ inner_join(top_10_data_for_plot, by = "page_id") %>%

+ # Order advertisers by their median spend per criterion (fct_reorder default)

+ mutate(page_name = fct_reorder(page_name, spend_per)) %>%

+ ggplot(aes(x = page_name, y = spend_per)) +

+ # Boxplots show the spread of spend across the matched criteria per advertiser

+ geom_boxplot() +

+ scale_y_continuous(

+ labels = dollar_format(prefix = "$", scale = 1/1000, suffix = "k"),

+ expand = c(0, 0) # No padding at either end of the spend axis

+ ) +

+ labs(

+ title = "Top 10 Advertisers Using DHS's 'Latino Proxy' Targeting",

+ subtitle = "Breakdown of ad spend by detailed targeting criterion",

+ y = "Total Ad Spend (USD)",

+ x = NULL

+ ) +

+ theme_minimal(base_family = "sans") +

+ theme(

+ legend.position = "bottom",

+ panel.grid.major.y = element_blank(), # Cleaner look

+ panel.grid.minor.x = element_blank(),

+ axis.text.y = element_text(face = "bold")

+ ) +

+ coord_flip()

+```

+

+

+

+Beyond the Department of Homeland Security, the analysis reveals that

+the targeting criteria used as proxies for the Latino community are also

+employed by a diverse range of other major advertisers. The top 10

+spenders using these methods include:

+

+- Public Health & Advocacy Groups: Organizations like Planned

+ Parenthood, Tobacco Free Florida, and The California Endowment use

+ this targeting for outreach and awareness campaigns.

+

+- Non-Profits and Charities: Pages such as Catholic Relief Services,

+ Operation Smile, and The International Fellowship of Christians and

+ Jews appear to use these criteria for fundraising and supporter

+ engagement.

+

+This confirms that while the DHS campaign is unique in its scale and

+messaging, the underlying methods for reaching the Latino community are

+common tools used by a wide array of organizations across the non-profit

+and public health sectors.

+

+## A Glimpse into Other `metatargetr` Features

+

+Beyond its core focus on targeting, `metatargetr` includes a suite of

+functions for retrieving other valuable types of data, enabling a more

+holistic analysis of digital advertising. Below is a very short showcase

+of them:

+

+### Facebook and Instagram Page Information

+

+To complement the targeting data, you can retrieve detailed metadata

+about Facebook pages, such as like counts, creation dates, and contact

+information, using `get_page_insights()`. For historical page

+information from many pages at once, the package also offers

+`get_page_info_db()`.

+

+``` r

+dhs_page_info <- get_page_insights(pageid = "179587888720522", include_info = "page_info")

+

+glimpse(dhs_page_info)

+```

+```

+ ## Rows: 1

+ ## Columns: 24

+ ## $ page_name "Department of Homeland Security"

+ ## $ is_profile_page "FALSE"

+ ## $ page_is_deleted "FALSE"

+ ## $ page_is_restricted "FALSE"

+ ## $ has_blank_ads "FALSE"

+ ## $ hidden_ads "0"

+ ## $ page_profile_uri "https://facebook.com/homelandsecurity"

+ ## $ page_id "179587888720522"

+ ## $ page_verification "BLUE_VERIFIED"

+ ## $ entity_type "PERSON_PROFILE"

+ ## $ page_alias "homelandsecurity"

+ ## $ likes "1011008"

+ ## $ page_category "Government organisation"

+ ## $ ig_verification "TRUE"

+ ## $ ig_username "dhsgov"

+ ## $ ig_followers "313631"

+ ## $ shared_disclaimer_info "[]"

+ ## $ about "Official Facebook page of the U.S. Department …

+ ## $ event "CREATION: 2010-12-01 20:39:04"

+ ## $ city "Washington"

+ ## $ country "United States of America"

+ ## $ postal_code "20528"

+ ## $ state "DC"

+ ## $ phone_number "+12022817828"

+```

+

+### Google Ad Data

+

+The package is not limited to Meta. While outside the scope of this

+tutorial, functions exist to retrieve spending data from Google’s Ad

+Library, allowing for powerful cross-platform comparisons.

+

+For example, the `get_ggl_ads` function can get different tables that

+are provided by the Google Ad Transparency report, such as statistics on

+weekly spending per advertiser:

+

+``` r

+get_ggl_ads("weekly_spend") %>%

+ glimpse()

+```

+

+```

+ ## ℹ Downloading data bundle from Google... (This may take a moment)

+

+ ## ✔ Download complete. Extracting files...

+

+ ## ℹ Reading data from 'google-political-ads-advertiser-weekly-spend.csv'...

+

+ ## ✔ Processing complete.

+

+ ## Rows: 296,883

+ ## Columns: 24

+ ## $ Advertiser_ID "AR00000475401340059649", "AR00000475401340059649", "A…

+ ## $ Advertiser_Name "Vince Leach for Senate", "Vince Leach for Senate", "V…

+ ## $ Election_Cycle NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA, NA…

+ ## $ Week_Start_Date 2020-08-23, 2020-08-30, 2020-09-06, 2020-09-13, 2020-…

+ ## $ Spend_USD 400, 500, 400, 400, 200, 400, 400, 300, 300, 300, 200,…

+ ## $ Spend_EUR 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, …

+ ## $ Spend_INR 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, …

+ ## $ Spend_BGN 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, …

+ ## $ Spend_CZK 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, …

+ ## $ Spend_DKK 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, …

+ ## $ Spend_HUF 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, …

+ ## $ Spend_PLN 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, …

+ ## $ Spend_RON 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, …

+ ## $ Spend_SEK 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, …

+ ## $ Spend_GBP 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, …

+ ## $ Spend_NZD 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, …

+ ## $ Spend_ILS 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, …

+ ## $ Spend_AUD 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, …

+ ## $ Spend_TWD 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, …

+ ## $ Spend_BRL 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, …

+ ## $ Spend_ARS 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, …

+ ## $ Spend_ZAR 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, …

+ ## $ Spend_CLP 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, …

+ ## $ Spend_MXN 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, …

+```

+

+These functions transform `metatargetr` from a simple targeting tool

+into a comprehensive ad intelligence package.

+

+## Conclusion

+

+The `metatargetr` package provides a powerful and accessible toolkit for

+researchers, journalists, and analysts looking to explore the nuances of

+advertising on digital platforms. As this tutorial has shown, by

+fetching and aggregating historical data, you can quickly move from raw

+numbers to compelling visualizations that uncover the strategic

+decisions behind major ad campaigns.

+

+By offering a direct line to targeting data, both live and historical,

+and equipping users with the tools to analyze it effectively,

+`metatargetr` opens new avenues for understanding campaign strategies

+and their societal impact. Happy researching!

diff --git a/docs/src/components/AuthorCard.js b/docs/src/components/AuthorCard.js

index 7020040..dc915d2 100644

--- a/docs/src/components/AuthorCard.js

+++ b/docs/src/components/AuthorCard.js

@@ -1,6 +1,6 @@

import React from "react";

-const AuthorCard = ({ name, avatar, avatarSrc, position, website, twitter, scholar, mastodon, linkedin }) => (

+const AuthorCard = ({ name, avatar, avatarSrc, position, website, twitter, scholar, mastodon, linkedin, bluesky }) => (

// make card with author information

diff --git a/docs/static/img/analysis/metatargetr/unnamed-chunk-10-1.png b/docs/static/img/analysis/metatargetr/unnamed-chunk-10-1.png

new file mode 100644

index 0000000..6a339fa

Binary files /dev/null and b/docs/static/img/analysis/metatargetr/unnamed-chunk-10-1.png differ

diff --git a/docs/static/img/analysis/metatargetr/unnamed-chunk-11-1.png b/docs/static/img/analysis/metatargetr/unnamed-chunk-11-1.png

new file mode 100644

index 0000000..d0774ab

Binary files /dev/null and b/docs/static/img/analysis/metatargetr/unnamed-chunk-11-1.png differ

diff --git a/docs/static/img/analysis/metatargetr/unnamed-chunk-14-1.png b/docs/static/img/analysis/metatargetr/unnamed-chunk-14-1.png

new file mode 100644

index 0000000..bb1abe4

Binary files /dev/null and b/docs/static/img/analysis/metatargetr/unnamed-chunk-14-1.png differ

diff --git a/docs/static/img/contributors/votta.jpg b/docs/static/img/contributors/votta.jpg

new file mode 100644

index 0000000..3c94f17

Binary files /dev/null and b/docs/static/img/contributors/votta.jpg differ

diff --git a/docs/static/img/platforms/data-collection-meta-ads/signup.png b/docs/static/img/platforms/data-collection-meta-ads/signup.png

new file mode 100644

index 0000000..0cddc8c

Binary files /dev/null and b/docs/static/img/platforms/data-collection-meta-ads/signup.png differ

diff --git a/docs/static/img/platforms/data-collection-meta-ads/unnamed-chunk-15-1.png b/docs/static/img/platforms/data-collection-meta-ads/unnamed-chunk-15-1.png

new file mode 100644

index 0000000..6bb44c1

Binary files /dev/null and b/docs/static/img/platforms/data-collection-meta-ads/unnamed-chunk-15-1.png differ

diff --git a/docs/static/img/platforms/data-collection-meta-ads/unnamed-chunk-16-1.png b/docs/static/img/platforms/data-collection-meta-ads/unnamed-chunk-16-1.png

new file mode 100644

index 0000000..553a0e0

Binary files /dev/null and b/docs/static/img/platforms/data-collection-meta-ads/unnamed-chunk-16-1.png differ

diff --git a/docs/static/img/platforms/data-collection-meta-ads/unnamed-chunk-17-1.png b/docs/static/img/platforms/data-collection-meta-ads/unnamed-chunk-17-1.png

new file mode 100644

index 0000000..bb6e855

Binary files /dev/null and b/docs/static/img/platforms/data-collection-meta-ads/unnamed-chunk-17-1.png differ

diff --git a/docs/static/img/platforms/data-collection-meta-ads/unnamed-chunk-18-1.png b/docs/static/img/platforms/data-collection-meta-ads/unnamed-chunk-18-1.png

new file mode 100644

index 0000000..e182945

Binary files /dev/null and b/docs/static/img/platforms/data-collection-meta-ads/unnamed-chunk-18-1.png differ

diff --git a/docs/static/img/platforms/data-collection-meta-ads/unnamed-chunk-22-1.png b/docs/static/img/platforms/data-collection-meta-ads/unnamed-chunk-22-1.png

new file mode 100644

index 0000000..3d0d5f1

Binary files /dev/null and b/docs/static/img/platforms/data-collection-meta-ads/unnamed-chunk-22-1.png differ

diff --git a/docs/static/img/platforms/data-collection-meta-ads/unnamed-chunk-24-1.png b/docs/static/img/platforms/data-collection-meta-ads/unnamed-chunk-24-1.png

new file mode 100644

index 0000000..fe17028

Binary files /dev/null and b/docs/static/img/platforms/data-collection-meta-ads/unnamed-chunk-24-1.png differ