🌐 Project Page • 📄 Paper • 🤗 Huggingface

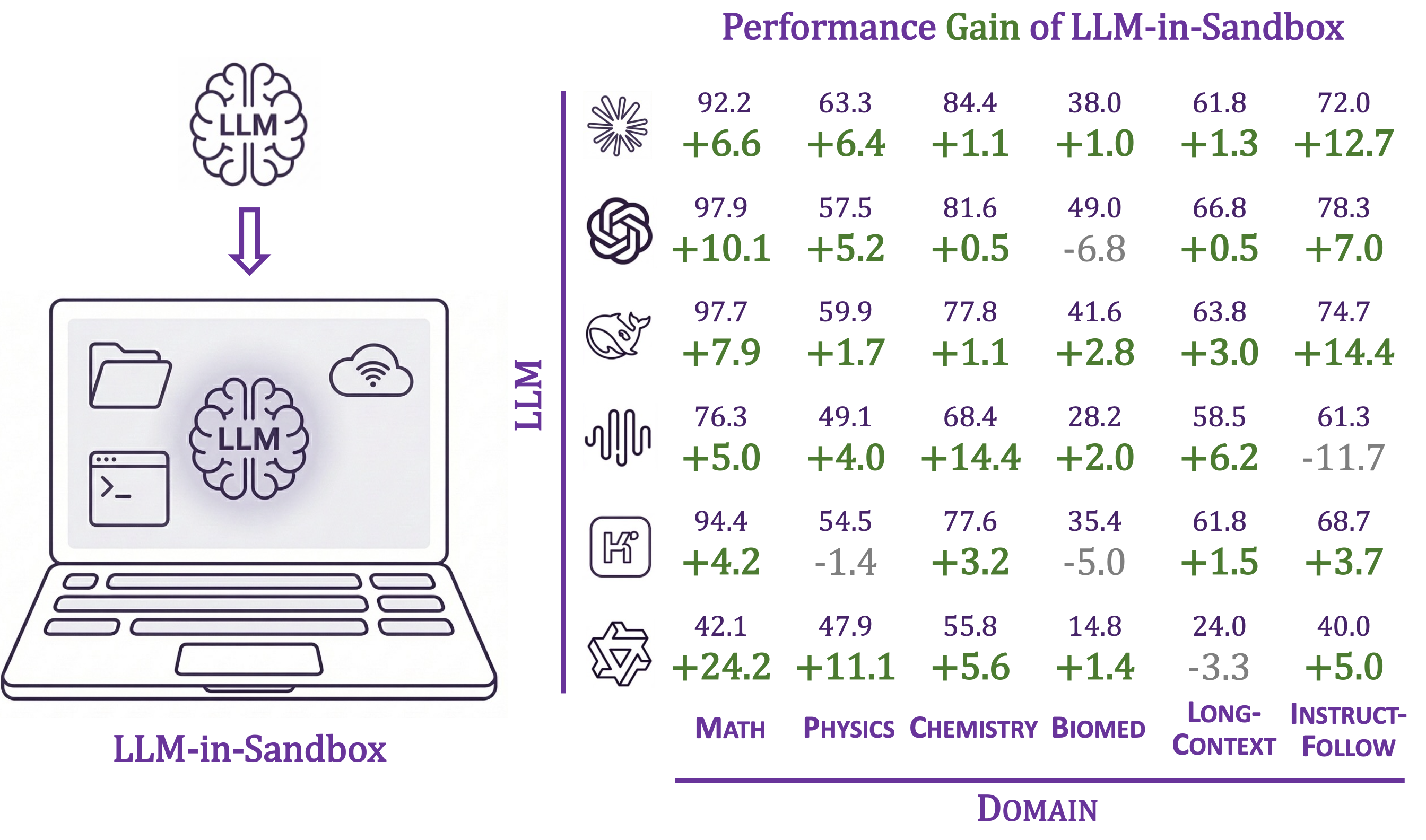

Enabling LLMs to explore within a code sandbox (i.e., a virtual computer) to elicit general agentic intelligence.

Features:

- 🌍 General-purpose: works beyond coding, including scientific reasoning, long-context understanding, video production, travel planning, and more

- 🐳 Isolated execution environment via Docker containers

- 🔌 Compatible with OpenAI, Anthropic, and self-hosted servers (vLLM, SGLang, etc.)

- 📁 Flexible I/O: mount any input files, export any output files

Requirements: Python 3.10+, Docker

    pip install llm-in-sandbox

Or install from source:

    git clone https://github.com/llm-in-sandbox/llm-in-sandbox.git
    cd llm-in-sandbox
    pip install -e .

Docker Image

The default Docker image (cdx123/llm-in-sandbox:v0.1) will be automatically pulled when you first run the agent. The first run may take a minute to download the image (~400MB), but subsequent runs will start instantly.
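To avoid the download delay on the first agent run, the image can be fetched ahead of time. The following is a minimal sketch of one way to do that from Python, assuming the `docker` CLI is on `PATH`. It relies only on the standard `docker image inspect` and `docker pull` subcommands; the helper names (`inspect_cmd`, `pull_cmd`, `ensure_image`) are hypothetical and not part of llm-in-sandbox.

```python
import shutil
import subprocess

# Default image name as stated in this README.
DEFAULT_IMAGE = "cdx123/llm-in-sandbox:v0.1"


def inspect_cmd(image: str) -> list[str]:
    """Command that exits with status 0 only if the image exists locally."""
    return ["docker", "image", "inspect", image]


def pull_cmd(image: str) -> list[str]:
    """Command that downloads the image from its registry."""
    return ["docker", "pull", image]


def ensure_image(image: str = DEFAULT_IMAGE) -> bool:
    """Pull the image if it is missing. Returns True if a pull was performed."""
    if shutil.which("docker") is None:
        raise RuntimeError("docker CLI not found on PATH")
    present = subprocess.run(
        inspect_cmd(image),
        stdout=subprocess.DEVNULL,
        stderr=subprocess.DEVNULL,
    ).returncode == 0
    if present:
        return False
    subprocess.run(pull_cmd(image), check=True)
    return True
```

Calling `ensure_image()` once before the first run should leave the default image in the local cache, so the agent does not have to download it on startup.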

Advanced: Build your own image

Modify the Dockerfile and build your own image:

    llm-in-sandbox build
    # Then use: --docker_image llm-in-sandbox:v0.1

LLM-in-Sandbox works with various LLM providers including OpenAI, Anthropic, and self-hosted servers (vLLM, SGLang, etc.).

    llm-in-sandbox run \
        --query "write a hello world in python" \
        --llm_name "openai/gpt-5" \
        --llm_base_url "http://your-api-server/v1" \
        --api_key "your-api-key"

Using local vLLM server for Qwen3-Coder-30B-A3B-Instruct

1. Start vLLM server:

       vllm serve Qwen/Qwen3-Coder-30B-A3B-Instruct \
           --served-model-name qwen3_coder \
           --enable-auto-tool-choice \
           --tool-call-parser qwen3_coder \
           --tensor-parallel-size 8

2. Run agent (in a new terminal once server is ready):

       llm-in-sandbox run \
           --query "write a hello world in python" \
           --llm_name qwen3_coder \
           --llm_base_url "http://localhost:8000/v1" \
           --temperature 0.7

Using local SGLang server for DeepSeek-V3.2-Thinking

1. Start SGLang server:

       python3 -m sglang.launch_server \
           --model-path "deepseek-ai/DeepSeek-V3.2" \
           --served-model-name "DeepSeek-V3.2" \
           --trust-remote-code \
           --tp-size 8 \
           --tool-call-parser deepseekv32 \
           --reasoning-parser deepseek-v3 \
           --host 0.0.0.0 \
           --port 5678

2. Run agent (in a new terminal once server is ready):

       llm-in-sandbox run \
           --query "write a hello world in python" \
           --llm_name DeepSeek-V3.2 \
           --llm_base_url "http://0.0.0.0:5678/v1" \
           --extra_body '{"chat_template_kwargs": {"thinking": True}}'

| Parameter | Description | Default |
|---|---|---|
| `--query` | Task for the agent | required |
| `--llm_name` | Model name | required |
| `--llm_base_url` | API endpoint URL | from `LLM_BASE_URL` env var |
| `--api_key` | API key (not needed for local server) | from `OPENAI_API_KEY` env var |
| `--input_dir` | Input files folder to mount (optional) | None |
| `--output_dir` | Output folder for results | `./output` |
| `--docker_image` | Docker image to use | `cdx123/llm-in-sandbox:v0.1` |
| `--prompt_config` | Path to prompt template | `./config/general.yaml` |
| `--temperature` | Sampling temperature | 1.0 |
| `--max_steps` | Max conversation turns | 100 |
| `--extra_body` | Extra JSON body for LLM API calls | None |

Run `llm-in-sandbox run --help` for all available parameters.
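When the agent is launched from a script rather than typed by hand, the documented flags can be assembled into an argument list. The sketch below is an illustration under stated assumptions, not the project's own API: `build_run_command` is a hypothetical helper, it uses only the flags listed in this README, and it serializes `--extra_body` with `json.dumps` on the assumption that the CLI parses that value as JSON. Note that `json.dumps` emits lowercase `true`/`false`; if the CLI expects a different literal form, pass the string through unchanged instead.

```python
import json
from typing import Any, Optional


def build_run_command(
    query: str,
    llm_name: str,
    *,
    llm_base_url: Optional[str] = None,
    api_key: Optional[str] = None,
    input_dir: Optional[str] = None,
    output_dir: Optional[str] = None,
    docker_image: Optional[str] = None,
    prompt_config: Optional[str] = None,
    temperature: Optional[float] = None,
    max_steps: Optional[int] = None,
    extra_body: Optional[dict[str, Any]] = None,
) -> list[str]:
    """Build an argv list for `llm-in-sandbox run` (hypothetical helper)."""
    # --query and --llm_name are the two required parameters.
    cmd = ["llm-in-sandbox", "run", "--query", query, "--llm_name", llm_name]

    # Optional flags are emitted only when a value is given, so the CLI
    # falls back to its own defaults (env vars, ./output, and so on).
    optional = {
        "--llm_base_url": llm_base_url,
        "--api_key": api_key,
        "--input_dir": input_dir,
        "--output_dir": output_dir,
        "--docker_image": docker_image,
        "--prompt_config": prompt_config,
        "--temperature": temperature,
        "--max_steps": max_steps,
    }
    for flag, value in optional.items():
        if value is not None:
            cmd += [flag, str(value)]

    # Assumption: the CLI accepts a JSON object string for --extra_body.
    if extra_body is not None:
        cmd += ["--extra_body", json.dumps(extra_body)]
    return cmd
```

The returned list can be passed to `subprocess.run(cmd, check=True)`, which avoids shell quoting problems with the nested quotes in `--extra_body`.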

Each run creates a timestamped folder:

    output/2026-01-16_14-30-00/
    ├── files/
    │   ├── answer.txt      # Final answer
    │   └── hello_world.py  # Output file
    └── trajectory.json     # Execution history
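The layout above can be read back programmatically after a run. The following is a minimal sketch, assuming run folders are named in the `YYYY-MM-DD_HH-MM-SS` pattern seen in the example and that `trajectory.json` is valid JSON; its internal schema is not documented here, so it is returned as parsed, without interpretation. The helper names are hypothetical.

```python
import json
from datetime import datetime
from pathlib import Path

# Folder name pattern inferred from the example "2026-01-16_14-30-00".
RUN_DIR_FORMAT = "%Y-%m-%d_%H-%M-%S"


def latest_run_dir(output_dir):
    """Return the newest timestamped run folder under output_dir, or None."""
    runs = []
    for child in Path(output_dir).iterdir():
        if not child.is_dir():
            continue
        try:
            stamp = datetime.strptime(child.name, RUN_DIR_FORMAT)
        except ValueError:
            continue  # Not a timestamped run folder; skip it.
        runs.append((stamp, child))
    if not runs:
        return None
    return max(runs, key=lambda item: item[0])[1]


def load_run(run_dir):
    """Return (answer_text_or_None, parsed_trajectory) for one run folder."""
    run_dir = Path(run_dir)
    answer_path = run_dir / "files" / "answer.txt"
    answer = None
    if answer_path.exists():
        answer = answer_path.read_text(encoding="utf-8")
    with open(run_dir / "trajectory.json", encoding="utf-8") as fh:
        trajectory = json.load(fh)
    return answer, trajectory
```

For example, `load_run(latest_run_dir("./output"))` would return the final answer and execution history of the most recent run, provided at least one run folder exists.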

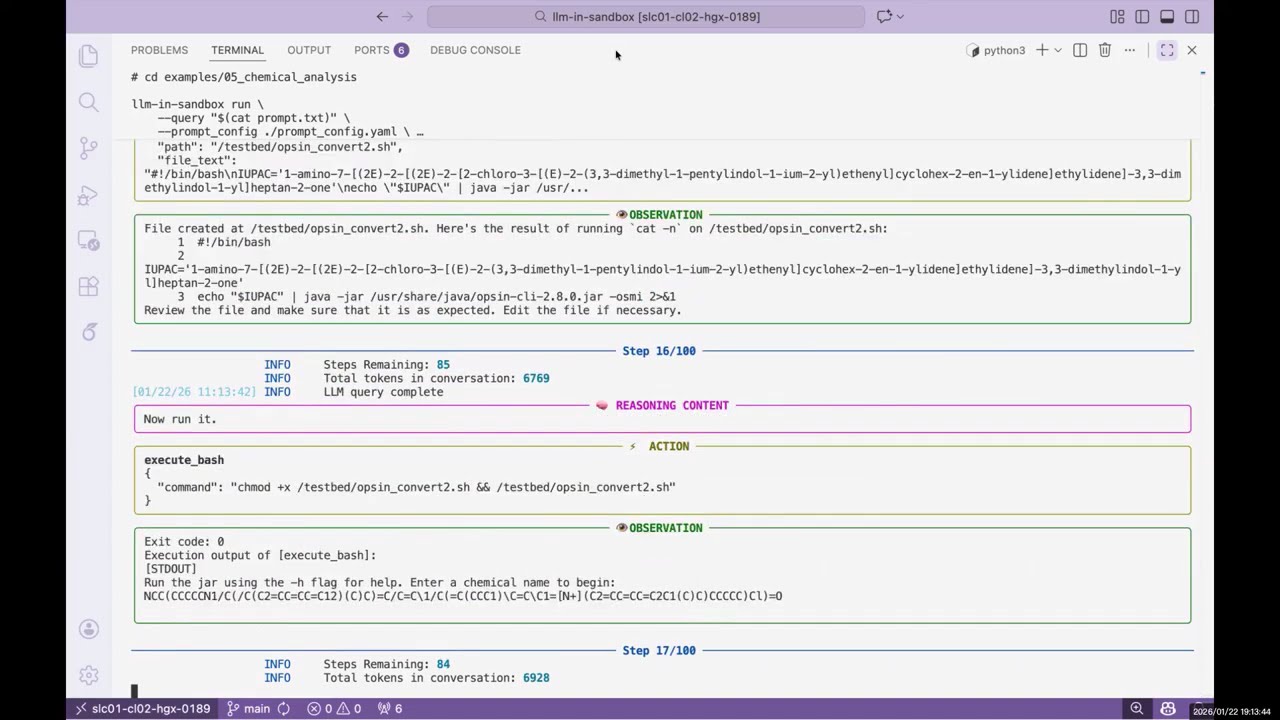

We provide examples across diverse non-coding domains: scientific reasoning, long-context understanding, instruction following, travel planning, video production, music composition, poster design, and more.

👉 See examples/README.md for the full list.

Daixuan Cheng: daixuancheng6@gmail.com

Shaohan Huang: shaohanh@microsoft.com

We borrowed design ideas and reused code from R2E-Gym. Thanks for the great work!

If you find our work helpful, please cite us:

    @article{cheng2026llm,
      title={LLM-in-Sandbox Elicits General Agentic Intelligence},
      author={Cheng, Daixuan and Huang, Shaohan and Gu, Yuxian and Song, Huatong and Chen, Guoxin and Dong, Li and Zhao, Wayne Xin and Wen, Ji-Rong and Wei, Furu},
      journal={arXiv preprint arXiv:2601.16206},
      year={2026}
    }