TL;DR

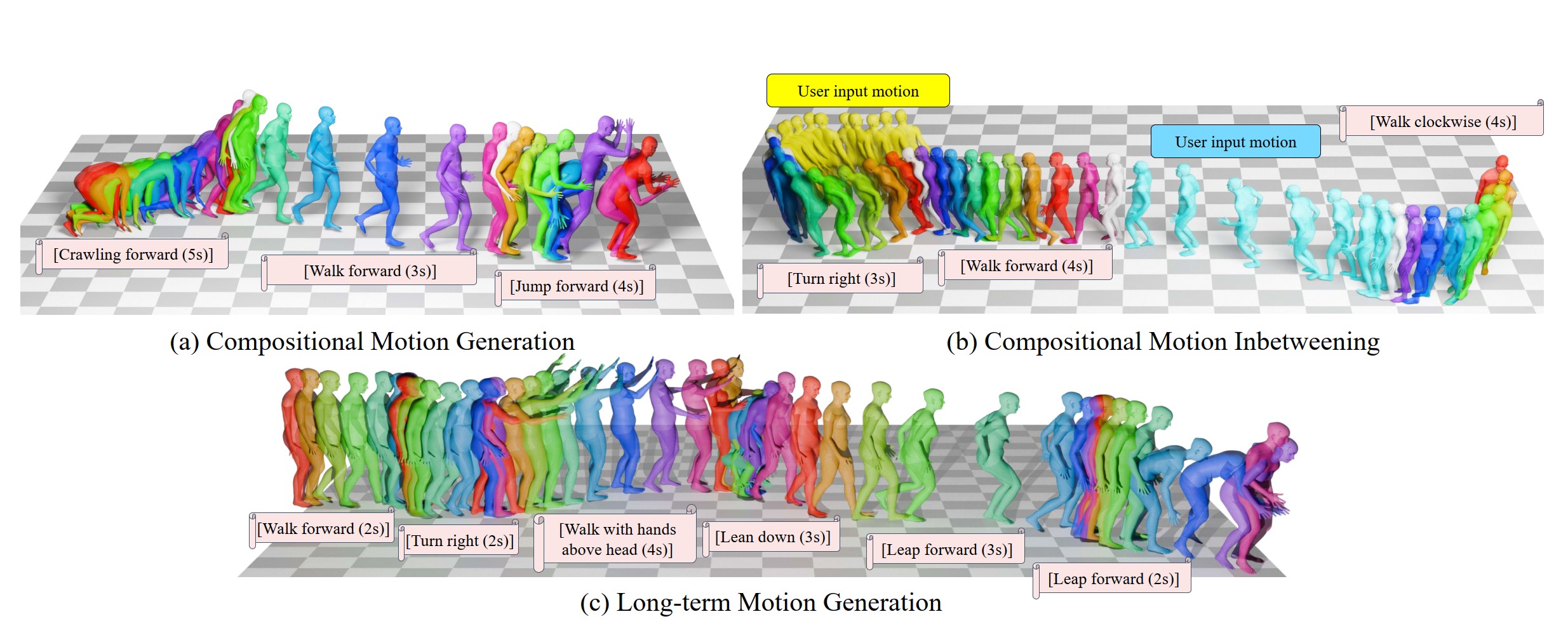

The proposed Compositional Phase Diffusion framework consistently generates semantically aligned multi-clip motion with smooth transitions by using latent-phase diffusion modules (SPDM and TPDM) to preserve phase continuity and enable inbetweening.

Abstract

Recent research on motion generation has shown significant progress in generating semantically aligned motion with singular semantics. However, when employing these models to create composite sequences containing multiple semantically generated motion clips, they often struggle to preserve the continuity of motion dynamics at the transition boundaries between clips, resulting in awkward transitions and abrupt artifacts. To address these challenges, we present Compositional Phase Diffusion, which leverages the Semantic Phase Diffusion Module (SPDM) and Transitional Phase Diffusion Module (TPDM) to progressively incorporate semantic guidance and phase details from adjacent motion clips into the diffusion process. Specifically, SPDM and TPDM operate within the latent motion frequency domain established by the pre-trained Action-Centric Motion Phase Autoencoder (ACT-PAE). This allows them to learn semantically important and transition-aware phase information from variable-length motion clips during training. Experimental results demonstrate the competitive performance of our proposed framework in generating compositional motion sequences that align semantically with the input conditions, while preserving phase transitional continuity between preceding and succeeding motion clips. Additionally, the motion inbetweening task is made possible by keeping the phase parameters of the input motion sequences fixed throughout the diffusion process, showcasing the potential for extending the proposed framework to accommodate various application scenarios.

If you find this work helpful in your research, please consider leaving a star ⭐️ and citing:

    @inproceedings{au2025transphase,
      title={Deep Compositional Phase Diffusion for Long Motion Sequence Generation},
      author={Au, Ho Yin and Chen, Jie and Jiang, Junkun and Xiang, Jingyu},
      year={2025},
      booktitle={The Thirty-ninth Annual Conference on Neural Information Processing Systems}
    }

Please check out our follow-up works if interested:

SOSControl - saliency-aware and precise control of body part orientation and motion timing in text-to-motion generation.

- ✅ Released model and dataloader code

- ✅ Released model checkpoints and demo script

- ✅ Released processed data along with training and testing instructions

- ✅ Released code for generating evaluation motion samples

- 🔄 Provide detailed instructions and setup for running data processing and evaluation scripts in the external repository

- Clone the repository

      git clone https://github.com/asdryau/TransPhase.git
      cd TransPhase

- Create a conda environment

      conda create -n transphase python=3.9.13
      conda activate transphase

- Install dependencies

      conda install pytorch==1.13.1 torchvision==0.14.1 torchaudio==0.13.1 pytorch-cuda=11.6 -c pytorch -c nvidia
      pip install -r requirements.txt

- Download

  - Download `model_weights.zip` and `processed_data.zip` from HERE

- Repository Setup

  - Extract both ZIP files and copy the contents into the `TransPhase/` directory of the current repository.

- Final File Structure

      TransPhase
      ├── data
      │   ├── label_clip_emb_BABELteach.npz
      │   ├── meta_motion_CLIP_BABELteach_rel_train.json
      │   └── motion_CLIP_BABELteach_rel_train.pkl
      ├── evaluation
      │   ├── evaluation_data.csv
      │   └── evaluation_data.pkl
      ├── model
      │   ├── PAE/lightning_logs/version_0/checkpoints/last.ckpt
      │   ├── SPDM/lightning_logs/version_0/checkpoints/last.ckpt
      │   ├── TPDM/lightning_logs/version_0/checkpoints/last.ckpt
      │   ├── inv_rand_proj_15.npy
      │   └── rand_proj_15.npy
      └── utils
          └── SMPL_FEMALE.pkl
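The expected layout can be checked before running any script. The following is a minimal sketch, not part of the repository: the helper name `find_missing_files` is hypothetical, and the paths are copied from the Final File Structure listing in this README. It assumes it is run from the `TransPhase/` root.

```python
from pathlib import Path

# Relative paths copied from the "Final File Structure" listing in this README.
EXPECTED_FILES = [
    "data/label_clip_emb_BABELteach.npz",
    "data/meta_motion_CLIP_BABELteach_rel_train.json",
    "data/motion_CLIP_BABELteach_rel_train.pkl",
    "evaluation/evaluation_data.csv",
    "evaluation/evaluation_data.pkl",
    "model/PAE/lightning_logs/version_0/checkpoints/last.ckpt",
    "model/SPDM/lightning_logs/version_0/checkpoints/last.ckpt",
    "model/TPDM/lightning_logs/version_0/checkpoints/last.ckpt",
    "model/inv_rand_proj_15.npy",
    "model/rand_proj_15.npy",
    "utils/SMPL_FEMALE.pkl",
]


def find_missing_files(repo_root="."):
    """Return the expected files that are absent under repo_root (hypothetical helper)."""
    root = Path(repo_root)
    return [rel for rel in EXPECTED_FILES if not (root / rel).is_file()]


if __name__ == "__main__":
    missing = find_missing_files(".")
    if missing:
        print("Missing files:")
        for rel in missing:
            print("  " + rel)
    else:
        print("All expected files are present.")
```

An empty result suggests that both ZIP files were extracted into the right place; any listed path points to the archive that still needs to be copied.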

The input text and duration specifications can be modified directly within the demo script.

- Long-term Motion Generation

      python demo_t2m_long.py

- Motion Inbetweening

      python demo_mib.py

We use the SMPL-X Blender add-on to visualize the generated .npz file.

Please register at https://smpl-x.is.tue.mpg.de, download the SMPL-X for Blender add-on, and follow the provided installation instructions.

Once installed, select Animation -> Add Animation within the SMPL-X sidebar tool, and navigate to the generated .npz file for visualization.
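Before loading a generated file into Blender, it can help to confirm which arrays it contains. A .npz file is a zip archive with one .npy member per array, so the names can be listed with the Python standard library alone. This is a minimal sketch: the helper name `list_npz_arrays` is hypothetical, and this README does not state the output path of the demo scripts, so the path must be supplied by the user.

```python
import zipfile


def list_npz_arrays(npz_path):
    """Return the sorted array names stored in a .npz archive (hypothetical helper).

    Each array is stored as a zip member named "<array name>.npy",
    so the names can be read without importing NumPy.
    """
    with zipfile.ZipFile(npz_path) as archive:
        return sorted(
            name[: -len(".npy")]
            for name in archive.namelist()
            if name.endswith(".npy")
        )
```

For example, `print(list_npz_arrays("path/to/generated.npz"))`, where the path is a placeholder for the file written by the demo script.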

To train the models, execute the following commands:

    python -m model.PAE.train
    python -m model.SPDM.train
    python -m model.TPDM.train

Note: For details on processing the BABEL-TEACH dataset, please refer to the PriorMDM data processing script and to the code in misc/babel.py and model/datamodule_babelteach_rel.py within this repository.

To generate the evaluation output for our model, execute the following commands:

    python -m evaluation.test_mib
    python -m evaluation.test_t2m_pair
    python -m evaluation.test_t2m_long

To run the evaluation for the motion inbetweening task, execute the following command:

    python -m evaluation.qe_mib

Note: For details on evaluating on the BABEL-TEACH dataset, please refer to the PriorMDM evaluation script and the PriorMDM evaluation dataloader.
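The training and generation commands listed in this README can also be chained from a single script. The sketch below is an illustration rather than part of the repository: `build_commands` and `run_all` are hypothetical helpers, and the module names are copied from the commands in this README. Running the PAE before SPDM and TPDM is an assumption, based on the abstract describing ACT-PAE as pre-trained.

```python
import subprocess
import sys

# Module names copied from the commands in this README.
TRAIN_MODULES = ["model.PAE.train", "model.SPDM.train", "model.TPDM.train"]
GENERATE_MODULES = [
    "evaluation.test_mib",
    "evaluation.test_t2m_pair",
    "evaluation.test_t2m_long",
]


def build_commands(modules, python=sys.executable):
    """Turn module names into `python -m <module>` argument lists."""
    return [[python, "-m", module] for module in modules]


def run_all(modules, dry_run=False):
    """Run each module in order, stopping at the first failure."""
    for command in build_commands(modules):
        print("Running:", " ".join(command))
        if not dry_run:
            # check=True raises CalledProcessError on a non-zero exit code.
            subprocess.run(command, check=True)


if __name__ == "__main__":
    # Dry run by default so that nothing is launched by accident.
    run_all(TRAIN_MODULES, dry_run=True)
```

Calling `run_all(TRAIN_MODULES)` from the repository root would launch the three training jobs one after another, and `run_all(GENERATE_MODULES)` would do the same for the sample-generation scripts.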

- SMPL/SMPL-X: For human body modeling (SMPL_FEMALE.pkl)

- PyTorch3D: For rotation conversion utilities

- BABEL-TEACH Dataset: For motion-text paired data

- PriorMDM: For data processing and text-motion evaluation

This project is licensed under the MIT License - see the LICENSE file for details.